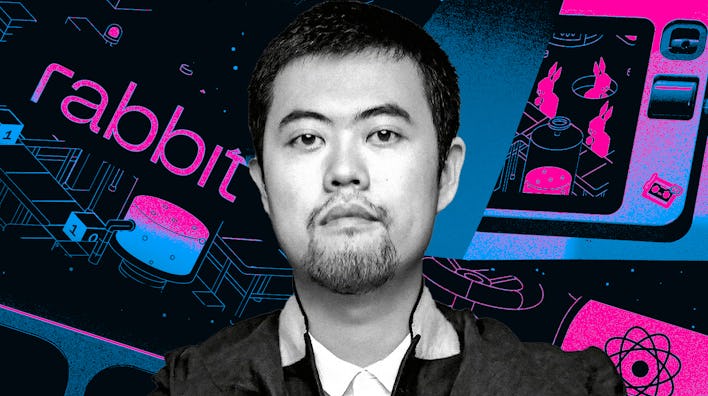

Inside the Rise of Jesse Lyu and the Rabbit R1

Rabbit’s founder and CEO, Jesse Lyu, tells all about the origins of the R1 and what he thinks about the AI gadget competition.

Building the World of 'Fallout'

'Dead Boy Detectives' Is A Spooky, Sweet Addition To The Sandman Universe

The Latest

The Forgotten, Evolutionary Reason Cats Love Being So High Up

Flesh and Blood

Netflix’s Most Unsettling Thriller Reveals a Painful, Overlooked Issue

'Shogun' Followed Its Own Rules Right to the Very End

Inside the Quest to Confirm A Strange 60-Year-Old Theory About Stonehenge

'Fallout 4' Is A Buggy Mess After Its Next-Gen Update

Could 'Doctor Who' Finally Explore All The Secret Regenerations?

How Ramin Djawadi Built the Sonic World of 'Fallout'