Clues About Apple's "Revolutionary" AR Glasses Revealed in Renewed Patents

More hints emerge.

The famous Apple soothsayer, Ming-Chi Kuo, claims that Apple’s “next-generation revolutionary user interface” will be a pair of augmented reality glasses. The Cupertino-based company is expected to launch a pair as early as 2020, and a recent patent renewal suggests that the new glasses are already starting to take shape.

A continuation patent published by the United States Patent and Trademark Office offers more evidence that Apple is cooking up AR spectacles, and renews the tech giant’s claim on a patent first granted in July 2017. The proposed system focuses on superimposing digital markers over a field of view and allowing users to interact with them using gesture controls.

There are lots of possible uses outlined in the patent. It could serve as a potential tour guide, using its system to highlight and annotate points of interest or offer directions when a user is touring a new city. It might even be used to locate a lost device at home. A majority of the blueprints in the patent depict the AR system as running on an iPhone, but the final two sketches show the wearer using fully AR-enabled glasses that don’t require a second device at all.

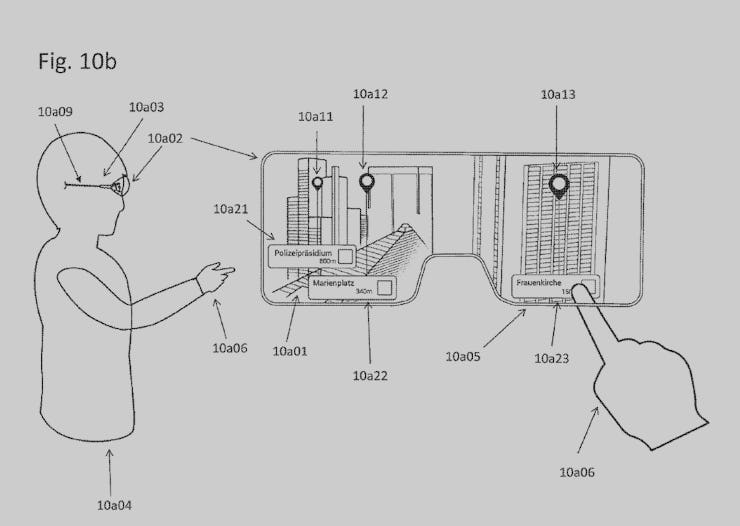

The user 10a04 has to move his hand 10a06 up such that the user's finger could overlap with the annotation 10a23 blended in in the view on semi-transparent screen 10a02 in order to interact with the corresponding point of interest (POI), such as triggering a program related to the corresponding POI running on the head-mounted display 10a03.
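The interaction described in that passage boils down to checking whether the fingertip, as seen through the screen, overlaps an annotation. Here is a minimal sketch of that check in Python. It is not taken from the patent: the `Annotation` class, the screen-coordinate convention, and the `annotation_under_fingertip` helper are all hypothetical names chosen for illustration, and it assumes some other component has already tracked the fingertip position.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Annotation:
    """Hypothetical on-screen label for a point of interest (POI)."""
    label: str
    x: float       # left edge, in screen units
    y: float       # top edge, in screen units
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        """Return True if the point (px, py) lies inside this annotation's box."""
        return (self.x <= px <= self.x + self.width
                and self.y <= py <= self.y + self.height)


def annotation_under_fingertip(
    annotations: Sequence[Annotation], finger_x: float, finger_y: float
) -> Optional[Annotation]:
    """Return the first annotation the fingertip overlaps, or None."""
    for annotation in annotations:
        if annotation.contains(finger_x, finger_y):
            return annotation
    return None


# Illustrative data only: two made-up POI labels on a made-up screen.
pois = [
    Annotation("Museum", x=100, y=50, width=80, height=30),
    Annotation("Cafe", x=300, y=120, width=60, height=30),
]
hit = annotation_under_fingertip(pois, finger_x=320, finger_y=130)
print(hit.label if hit else "no POI selected")
```

A real system would also need hand tracking and a way to map the camera's view of the finger onto the display, neither of which this sketch attempts.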

The document admits that the system would be easier to implement on a smartphone, which already has a built-in computer capable of processing real-time data. Adding an on-board computer to a pair of glasses without making them bulky, as has been the case with some early launches like Microsoft’s HoloLens 2, is considerably more of a hardware challenge. But Apple doesn’t discount it as a possibility. Here’s the patent:

…The present invention could also be applied to representing points of interest in a view of a real environment using an optical-see-through device. For example, the optical-see-through device has a semi-transparent screen, such as a semi-transparent spectacle or glasses.

Other patents and previous reports have painted a more detailed picture of the kind of device that could be announced, possibly within the year. In 2017, veteran Apple reporter Mark Gurman found evidence that the company was working on a “reality operating system” — rOS for short — for some kind of headset or glasses product.


Apple is also believed to be working on giving future iPhones a time-of-flight depth sensor that functions a lot like the lidar technology found in many autonomous cars. The tech uses a laser to determine how far away an object is based on how long the light from the laser takes to bounce back. This could drastically improve how well an iPhone overlays digital markers on real-time footage.
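The arithmetic behind a time-of-flight measurement is simple: light travels to the object and back, so the distance is the speed of light multiplied by the round-trip time, divided by two. The Python sketch below illustrates only that relationship. The function name and the 10-nanosecond example are assumptions made for illustration, and it says nothing about how Apple's sensor would actually measure or process the timing.

```python
# Speed of light in a vacuum, in metres per second (exact by definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to an object in metres, given the laser pulse's round-trip time.

    The pulse covers the distance twice (out and back), hence the division by 2.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


# Illustrative value: a pulse that returns after 10 nanoseconds
# corresponds to an object roughly 1.5 metres away.
print(f"{distance_from_round_trip(10e-9):.2f} m")
```

The tiny time scales involved hint at why this is demanding hardware: telling apart objects a few centimetres from each other means resolving differences of a fraction of a nanosecond.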

Finally, Apple is already hard at work recruiting augmented reality developers to its ARKit platform, which took center stage at last year’s Worldwide Developers Conference, an important first step toward creating as robust a marketplace for AR applications as it has for iOS. The company also introduced ARKit 2, which, among other things, brought collaborative AR to the iOS developer’s toolkit.

As Apple’s AR universe continues to expand from relatively rudimentary applications like digital tape measures and block-building games, it’s going to need a more carefully tailored piece of hardware to host all of it. That new hardware could be arriving within a year, which, given Samsung’s impressive new smartphone roster, won’t be a moment too soon.