Neural networks are pivotal to the future of A.I. and, according to Elon Musk, the future of all of humanity. Luckily, Google’s DeepMind just cracked the code to making neural networks a lot smarter by giving them a memory they can read from and write to.

In a study released in Nature on October 12, DeepMind showed how neural networks and memory systems can be combined to create a machine learning system that not only stores knowledge, but quickly uses it to reason based on circumstances. One of the biggest challenges with A.I. is getting it to remember stuff. It looks like we’re one step closer to achieving that.

Called differentiable neural computers (DNCs), the enhanced neural networks function much like a computer. A computer has a processor to complete tasks, but the processor needs a memory system to run algorithms over different data points. In a DNC, the neural network plays the role of the processor, and the memory attached to it plays the role of the computer’s memory.

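Prior to DeepMind’s innovation, most neural networks had to hold everything they learned in the same connections that do the computing, where new information can interfere with the network’s neuron activity. A separate memory gives the network somewhere to store data without that interference.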
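To make the idea of a network "looking something up" in memory more concrete, here is a minimal sketch of one ingredient such systems use: a content-based read, where a query is compared against every memory row and the most similar rows dominate the result. This is a simplified illustration, not DeepMind's implementation. The function names, the toy memory, and the `strength` value are all assumptions made for the example, and nothing here is learned.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two vectors by direction, ignoring their length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b + 1e-8)  # small constant avoids division by zero

def content_read(memory, key, strength=10.0):
    """Return soft read weights over memory rows and the blended read vector.

    `strength` is a hypothetical sharpness setting: higher values make the
    read focus more tightly on the best-matching row.
    """
    scores = [strength * cosine_similarity(row, key) for row in memory]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]  # numerically stable softmax
    total = sum(exps)
    weights = [e / total for e in exps]
    width = len(memory[0])
    read_vector = [
        sum(w * row[i] for w, row in zip(weights, memory)) for i in range(width)
    ]
    return weights, read_vector

# Toy memory with three stored rows (made up for illustration).
memory = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]
weights, read_vector = content_read(memory, key=[0.9, 0.1, 0.0])
print(weights)
print(read_vector)
```

Because every step is a smooth calculation rather than a hard "pick row 0," a training procedure can nudge the query and the stored rows in small increments. That smoothness is what the word "differentiable" in the name refers to.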

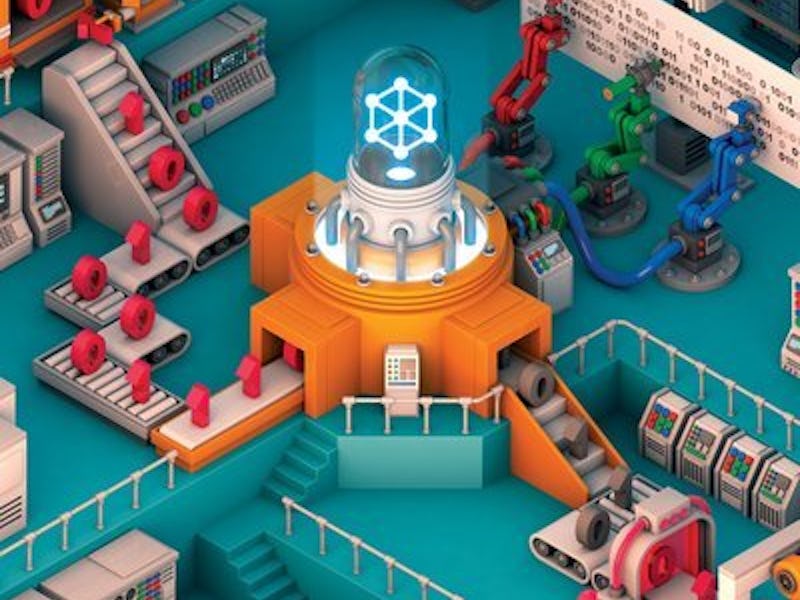

An illustration of how the DNC works.

Without any external memory, neural networks are only capable of reasoning a solution based on known information. They need massive quantities of data and practice in order to become more accurate. Like a human learning a new language, neural networks take time to become smart. It’s the same reason DeepMind’s neural network is great at Go but terrible at the strategy-based game Magic. Neural networks just can’t process enough variables without memory.

A.I. would suck at Magic but kill you at Go.

Memory allows neural networks to incorporate variables and rapidly analyze data, so that they can represent something as complex as a graph of London’s Underground and draw conclusions based on specific data points. DeepMind’s study found that a DNC could learn on its own to answer questions about the quickest routes between destinations, and about where a trip would end, just by using the newly presented graph and knowledge of other transportation systems. It could also deduce relations from a family tree with no information presented except the tree. The DNC was able to complete a given task without being fed the additional data points that a traditional neural network would need.
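For comparison, this is roughly what the hand-programmed version of the route-finding task looks like. The sketch below uses breadth-first search, a standard textbook algorithm, to find the route with the fewest stops. It is not how a DNC works internally; the point is that a programmer normally has to write this logic, whereas the DNC learned comparable behavior from examples. The station names and connections are invented for illustration and do not match any real map.

```python
from collections import deque

def shortest_route(graph, start, goal):
    """Breadth-first search: returns the route with the fewest stops, or None."""
    previous = {start: None}  # maps each visited station to the one before it
    queue = deque([start])
    while queue:
        station = queue.popleft()
        if station == goal:
            # Walk backwards from the goal to rebuild the route.
            route = []
            while station is not None:
                route.append(station)
                station = previous[station]
            return route[::-1]
        for neighbor in graph.get(station, []):
            if neighbor not in previous:
                previous[neighbor] = station
                queue.append(neighbor)
    return None  # goal cannot be reached from start

# Hypothetical toy network (not the real Underground).
toy_network = {
    "Northgate": ["Market", "Riverside"],
    "Market": ["Northgate", "Museum"],
    "Riverside": ["Northgate", "Museum", "Harbour"],
    "Museum": ["Market", "Riverside", "Harbour"],
    "Harbour": ["Riverside", "Museum"],
}

route = shortest_route(toy_network, "Northgate", "Harbour")
print(route)  # ['Northgate', 'Riverside', 'Harbour']
```

Note that this version counts stops rather than travel time, so "shortest" here means fewest connections.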

While that might not seem terribly impressive (Google Maps is already pretty good at calculating the most efficient route somewhere), the technology is a huge step for the future of A.I. If you think predictive search is efficient (or creepy), imagine how good it could be with neural network memory. When you search Facebook for the name Ben, it will know, because you were just on a mutual friend’s page looking at a picture of him, that you mean Ben from down the street, not Ben from elementary school.

Natural language learning A.I. would finally have enough context to handle both the language of the Wall Street Journal and the language of Black Twitter. Siri could understand that Pepe the Frog is more than just a character from a comic strip because she’s read every Inverse article about it.

“I am most impressed by the network’s ability to learn ‘algorithms’ from examples,” Brenden Lake, a cognitive scientist at New York University, told Technology Review. “Algorithms, such as sorting or finding shortest paths, are the bread and butter of classic computer science. They traditionally require a programmer to design and implement.”

Giving A.I. the ability to understand context allows it to skip the need for programmed algorithms.

While DeepMind’s DNC isn’t the first experiment in neural memory, it is the most sophisticated. That said, the neural network is still in its early stages, and it has a long way to go before it reaches human levels of learning. Researchers still need to figure out how to scale up the system’s processing so that it can scan and calculate using every piece of memory quickly.

For now, humans get to reign supreme neurologically.