New "Memristor" Chip Processes Images Just Like the Brain

We're one step closer to true brain-like computer architecture.

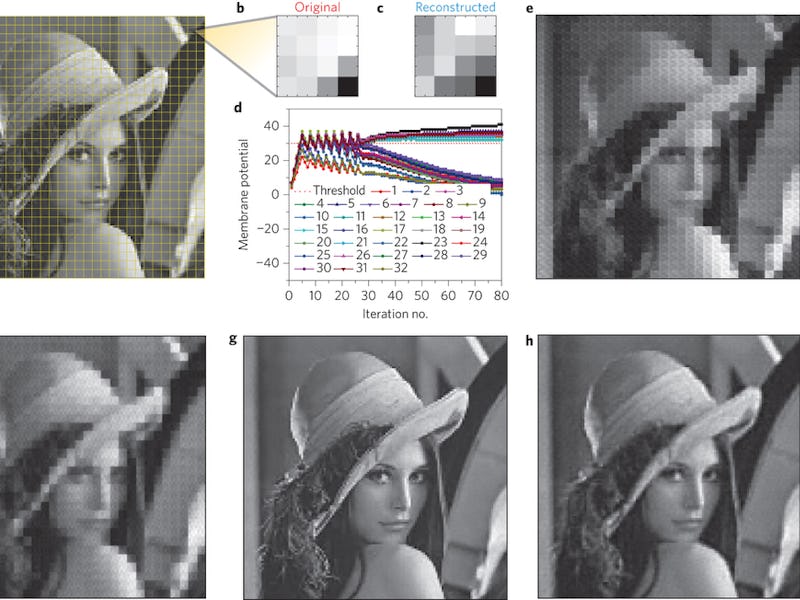

As the name implies, neural networks are supposed to be networks of computing “neurons” that mimic the organization of the brain, yet this week researchers from the University of Michigan claimed to have invented a new computer chip that’s truly based on the brain’s structure. By processing images the same way the brain does, their 32-by-32-neuron “neuromorphic” chip seems to be able to classify images much more efficiently than its predecessors.

Is neural computing about to get a lot more like the brain and, if so, why have scientists been talking about having “neural” networks this entire time?

First, consider the concept of a “memristor,” the basic computing unit used to create the chip in this study. Put simply, a memristor is a resistor with memory. Normal resistors have a certain level of resistance to electrical current and that’s pretty much the end of it, while the level of resistance of a memristor changes based on the voltages that have gone across it in the recent past. In other words, a memristor can both process data and store data in the form of its level of resistance. The resistance primarily dictates how much extra energy will need to be input for the memristor to hit its critical threshold and “fire” by performing its particular operation within the overall sequence.
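To make “a resistor with memory” concrete, here is a minimal Python sketch of a toy memristor. It is an illustration only, not the device model from the Michigan study: the class name `ToyMemristor`, the linear update rule, and every numeric value are assumptions chosen to keep the example short.

```python
from dataclasses import dataclass


@dataclass
class ToyMemristor:
    """Illustrative model only: resistance drifts with the voltage history."""
    r_on: float = 100.0      # ohms when fully "on" (assumed value)
    r_off: float = 16000.0   # ohms when fully "off" (assumed value)
    state: float = 0.5       # internal state in [0, 1]; 1 means fully on
    rate: float = 0.1        # state change per volt-second (assumed value)

    @property
    def resistance(self) -> float:
        # Linear mix of the two limiting resistances, weighted by the state.
        return self.r_on * self.state + self.r_off * (1.0 - self.state)

    def apply(self, voltage: float, dt: float = 1.0) -> float:
        """Apply a voltage for dt seconds, update the state, return the current."""
        current = voltage / self.resistance
        # Positive voltage pushes the state toward "on", negative toward "off".
        new_state = self.state + self.rate * voltage * dt
        self.state = min(1.0, max(0.0, new_state))
        return current


if __name__ == "__main__":
    m = ToyMemristor()
    print("initial resistance:", m.resistance)
    for _ in range(3):
        m.apply(1.0)   # three positive pulses
    print("after positive pulses:", m.resistance)
    for _ in range(3):
        m.apply(-1.0)  # three negative pulses
    print("after negative pulses:", m.resistance)
```

The point of the sketch is that the same object both conducts (it returns a current) and remembers (its resistance now depends on what was applied earlier), so no separate memory lookup is needed to know how it will respond next.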

That matters because, for one, that’s how the brain works; the brain doesn’t have a distinct RAM section, like a computer, so unlike a regular neural net it doesn’t need to fetch data out of memory just to direct the behavior of any one individual neuron. In a real brain, each neuron can nominally take care of itself, seeing to its own function and even doing some very basic modification of this function (learning), all on its own.

As a result, traditional neural networks running on traditional computers have always been roughly metaphorical on both the hardware and software levels. A.I. has already provided incredible gains for mankind, just by making use of the conceptual organization of the brain. With memristors to implement the literal organization of the brain, future brain chips could greatly magnify this impact.

That’s because there’s a very good reason that the brain evolved to function this way: when a computing process is tailored to a truly brain-like neural network, that process can be executed much more efficiently. Nothing needs to be fetched from some distant portion of the brain in order to perform basic functions, and so the overall process can be vastly simpler and more focused.

That’s the origin of the paper’s otherwise fairly opaque title: “Sparse coding with memristor networks.” The idea of sparse coding is that, like the brain, truly efficient code can achieve complex things with very little actual work. By actually implementing the brain’s approach, rather than merely imitating it, an algorithm for something like object identification can run with far less overall neural activity than we see in a traditional A.I. neural network designed to do the same thing.

The “sparse” version of an algorithm isn’t necessarily faster than the more complex version designed by conventional machine learning algorithms (though it often is), but it can be vastly more energy efficient. It can also ensure that only the minimum necessary number of neurons is reserved for a particular task, allowing more overall computing power and memory storage to be squeezed into a brain of the same size by avoiding bloated, brain-hogging processes.
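For a feel of what “sparse” means in practice, the sketch below encodes a small input using as few “neurons” as possible. It uses matching pursuit, a standard greedy sparse coding method picked here for brevity; the article does not say which algorithm the chip runs, so treat this as an assumption. The function names, the four-element dictionary, and the input values are all hypothetical.

```python
def dot(a, b):
    """Plain dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


def matching_pursuit(signal, dictionary, max_active=2, tol=1e-9):
    """Greedy sparse coding: explain `signal` with few dictionary atoms.

    `dictionary` is a list of unit-length vectors, each standing in for
    one neuron's preferred pattern. Returns {atom_index: coefficient}.
    """
    residual = list(signal)
    code = {}
    for _ in range(max_active):
        # Score every atom against whatever is still unexplained.
        scores = [dot(residual, atom) for atom in dictionary]
        best = max(range(len(dictionary)), key=lambda i: abs(scores[i]))
        if abs(scores[best]) <= tol:
            break  # nothing left worth explaining
        code[best] = code.get(best, 0.0) + scores[best]
        # Remove the chosen atom's contribution from the residual.
        residual = [r - scores[best] * a
                    for r, a in zip(residual, dictionary[best])]
    return code


if __name__ == "__main__":
    # Hypothetical dictionary: three axis-aligned patterns and one diagonal.
    atoms = [
        [1.0, 0.0, 0.0, 0.0],
        [0.0, 1.0, 0.0, 0.0],
        [0.0, 0.0, 1.0, 0.0],
        [0.6, 0.8, 0.0, 0.0],
    ]
    code = matching_pursuit([3.0, 4.0, 0.0, 0.0], atoms)
    print("active neurons:", sorted(code))
    print("coefficients:", code)
```

In this example the input lines up with the diagonal pattern, so a single active neuron is enough to describe it and the other three stay silent. That is the energy argument in miniature: fewer units firing for the same result.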

Memristors aren’t the only way to achieve neuron-like abilities; IBM has done some very promising work with “phase change neurons,” which similarly store up information about recent stimuli and use it to determine whether and how to fire. Exotic neuromorphic processor prototypes are becoming almost commonplace, but as researchers try out all sorts of wacky new chip designs they’re finding that evolution may have beaten them to the best possible answer.