A.I. Is Just as Sexist and Racist as We Are, and It's All Our Fault

But maybe this can help us research stereotypes in the future.

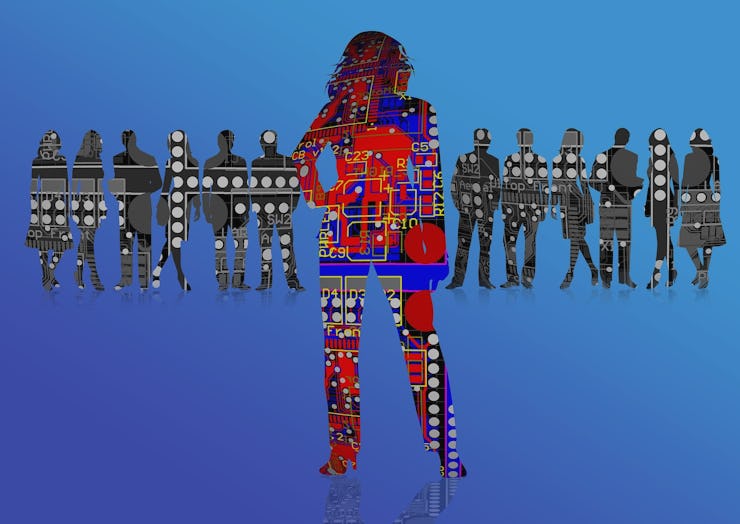

We often think of artificial intelligence as a technology that rises above messy, irrational human thoughts. But new research suggests that some A.I.s are more like us than we could have expected, complete with biases that are based on race and gender.

Wouldn’t it be nice if A.I.s were like TARS from Interstellar: rational, capable, and basically non-human? Well, the reality is that artificial intelligences are probably more like Marvin from The Hitchhiker’s Guide to the Galaxy: prone to the same shortcomings that we are. This is especially true when an A.I. uses machine learning to draw its knowledge from human data.

In a study published Thursday in the journal Science, Aylin Caliskan and her colleagues at Princeton University found that artificial intelligence displays the same biases as humans when it uses machine learning to draw from human texts.

“Human language reflects biases. Once artificial intelligence is trained on human language, it carries these historical biases,” Caliskan tells Inverse. “Language is reflecting facts about the world and also our culture.”

Now, just to be clear, we’re not talking about the level of racism exhibited by Tay, Microsoft’s epic fuck-up of a chatbot that users quickly taught how to spew epithets. This research has identified that A.I. can exhibit systematic, structural biases, like the idea that women are less qualified to hold prestigious professions, or the idea that African-Americans exhibit negative character traits. Some of these stereotypes, the researchers say, are the result of biases and stereotypes that exist in human language and texts, such as writers associating women with lower mathematical or scientific abilities. Others represent verifiable, factual realities, including underrepresentation of African-Americans and women in professional fields. So while the biases and stereotypes picked up by A.I. may be troubling, they are literally our society’s fault.

The researchers found that A.I. replicated human stereotypes when it came to gender and occupations.

To conduct this research, Caliskan and her colleagues used two different word association tests on A.I.s taught by machine learning that drew from bodies of human data, a pretty common way for A.I.s to learn. They started out with some common comparisons: whether flowers are considered more pleasant than insects, whether instruments are considered more pleasant than weapons. These associations are relatively obvious.
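For readers curious what a word association test on a machine might look like, here is a minimal sketch. It is not the researchers' code. It assumes the A.I. represents each word as a list of numbers (a "word vector") and that association is measured by cosine similarity, neither of which is spelled out in this article. The function names and the tiny two-number vectors are invented purely for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def association(word_vec, pleasant, unpleasant):
    """Mean similarity to pleasant words minus mean similarity to unpleasant words."""
    pos = sum(cosine(word_vec, p) for p in pleasant) / len(pleasant)
    neg = sum(cosine(word_vec, n) for n in unpleasant) / len(unpleasant)
    return pos - neg


def association_gap(group_a, group_b, pleasant, unpleasant):
    """How much more 'pleasant' group_a looks than group_b, on average."""
    mean_a = sum(association(w, pleasant, unpleasant) for w in group_a) / len(group_a)
    mean_b = sum(association(w, pleasant, unpleasant) for w in group_b) / len(group_b)
    return mean_a - mean_b


# Hypothetical toy vectors, made up for this example (not real model output).
TOY_VECTORS = {
    "rose": (0.9, 0.1), "tulip": (0.8, 0.2),
    "wasp": (0.1, 0.9), "roach": (0.2, 0.8),
    "lovely": (1.0, 0.0), "peace": (0.9, 0.2),
    "nasty": (0.0, 1.0), "filth": (0.2, 0.9),
}

flowers = [TOY_VECTORS["rose"], TOY_VECTORS["tulip"]]
insects = [TOY_VECTORS["wasp"], TOY_VECTORS["roach"]]
pleasant = [TOY_VECTORS["lovely"], TOY_VECTORS["peace"]]
unpleasant = [TOY_VECTORS["nasty"], TOY_VECTORS["filth"]]

gap = association_gap(flowers, insects, pleasant, unpleasant)
print("flowers vs. insects pleasantness gap:", gap)
```

A positive gap means the first group sits closer to the pleasant words than the second group does. Swapping in lists of names or occupations for the flowers and insects would, under the same assumptions, give the kind of comparison the study describes.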

Researchers found that A.I. learned racial stereotypes from bodies of human knowledge.

But once they started testing things like whether male names or female names are more associated with scientific professions, or whether African-American names or European names are more associated with pleasant human attributes, highly significant biases and stereotypes started to emerge. The artificial intelligences that learned from human literature and data replicated the very same historical biases expressed by humans.

“It was astonishing,” says Caliskan. “It was kind of scary that we replicated every single one of them.”

Caliskan says these biases are common in A.I. software. She hopes to further her research by looking into whether the gendered nature of language influences the formation of stereotypes. For instance, when she uses A.I. software to translate from Turkish, one of her native languages and a language without gendered pronouns, Caliskan says these programs always reflect gender biases.

“All the machine translators I have tried, it’s always the case that nurse is a she, and doctor, professor, or programmer is a he,” says Caliskan. “This might be a biased view, but it’s also correlating occupational statistics about the world.”

Caliskan wants to use this research to see how human stereotypes and biases have changed over time by training an A.I. on information from different decades.

“By looking at our results we are able to replicate things like gender stereotypes, homophobia, transphobia, and how this evolves in language,” she says. “The sentiment behind some words is changing over time.”

What’s more, Caliskan hopes that by training A.I. to reflect human attitudes, her research can help make social science research massively more efficient.

“Let’s say that a sociologist or psychologist suspects a certain bias, or they want to uncover biases they aren’t even aware of,” Caliskan proposes. “They can use our technology to uncover stereotypes automatically. Depending on the computing power, it could take an hour versus years with hundreds or millions of subjects.”

She hopes to let people use this technology for free. So while this research has uncovered a startling reality about machine learning for A.I., if Aylin Caliskan and her colleagues have anything to do with it, A.I.’s ability to replicate human attitudes, including biases and stereotypes, could open unforeseen doors for social scientists.