The most underrated sci-fi action movie on HBO Max reveals a controversial robotics debate

I, Robot may be more relevant now than ever before.

Sci-fi writers have long predicted a terrifying clash between humans and their own creations, but perhaps no one saw the threat more clearly, or earlier, than twentieth-century writer Isaac Asimov.

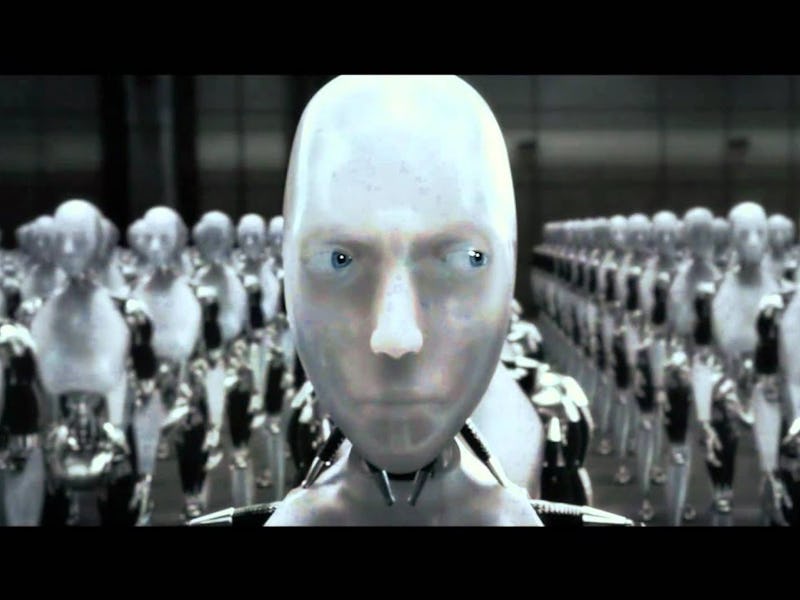

Decades after the publication of his short story collection I, Robot, Hollywood adapted the book into an epic movie starring Will Smith. The movie is streaming now on HBO Max, and it’s worth revisiting for a few reasons, according to Sergio Suarez Jr., founder and CEO of TackleAI, a company that works on real-world implementations of artificial intelligence.

“I think the scary thing for a lot of people is we're not that far off from that being a reality,” Suarez Jr. tells Inverse.

Although I, Robot’s suggestions about AI consciousness are still more fiction than fact, the film also raises grimmer implications about the nature of AI, which are playing out in real time today. Let’s take a closer look.

Reel Science is an Inverse series that reveals the real (and fake) science behind your favorite movies and TV.

Can AI defy humans?

AI can defy humans — though perhaps not in the way the movie suggests.

I, Robot’s central premise rests on Asimov’s “Three Laws of Robotics,” codes that all robots must obey according to their programming:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given to it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

In the real world, it’s possible for programmers today to code similar “universal truths” for AI to follow. However, this mechanism could lead to robots disobeying real-world laws to follow their universal truth — similar to a starving human who will steal food to survive.
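As a rough illustration of how prioritized rules like these might be encoded, here is a minimal Python sketch. The `Action` class, its flags, and the `choose` function are hypothetical names invented for this example; it is a toy model of the idea, not how any real robot is programmed.

```python
from dataclasses import dataclass


@dataclass
class Action:
    """A candidate action, flagged by which law it would break (hypothetical model)."""
    name: str
    harms_human: bool = False     # First Law: injures a human or lets one come to harm
    disobeys_order: bool = False  # Second Law: ignores a human order
    endangers_self: bool = False  # Third Law: puts the robot at risk


def violations(action: Action) -> tuple:
    # Tuples compare left to right, so a First Law violation always
    # outweighs any combination of Second and Third Law violations.
    return (action.harms_human, action.disobeys_order, action.endangers_self)


def choose(actions: list) -> Action:
    """Return the action with the least severe violations."""
    return min(actions, key=violations)


# The robot was ordered to stay put, but a human nearby is in danger.
options = [
    Action("obey and stay put", harms_human=True),
    Action("disobey and rescue", disobeys_order=True, endangers_self=True),
]
print(choose(options).name)  # disobey and rescue
```

In this toy setup, the robot ignores a direct order because obeying would break a higher-priority rule, which is the kind of defiance the paragraph above describes.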

“If its directive is to continue to exist or to continue to help the human — the only logical thing then is to defy whatever the law is so that you can continue to help,” Suarez Jr. says.

In the movie, detective Del Spooner (Will Smith) realizes a robot called Sonny is capable of disobeying these directives. Spooner is determined to pin the blame for the murder of Alfred Lanning, co-founder of US Robotics, on Sonny.


I, Robot’s premise was prescient in a lot of ways. In real life, AI is becoming capable of defying humans’ orders, and that worries Suarez Jr.

“Even if you give an AI a very black-and-white directive, it will be able to find its way around it, mostly by interpreting it differently,” he says. “It's already happening.”

So your Roomba may be programmed to clean your hardwood floors in a straight line, but if an object obstructs the cleaning bot, it may go around the obstruction rather than simply stopping.

“I think what this movie gets to... is we don't really know why,” Suarez Jr. says. “We don't have the understanding of why [AI] makes certain decisions.”

After all, a robot doesn’t leave you a journal entry explaining why it makes certain decisions; it just makes them. As neural nets (the systems behind much of modern AI, loosely modeled on the way the human brain functions) become more sophisticated, many developers don’t even understand the AI they are programming, leaving little hope for the rest of us.

“I would say 99.9% of developers in the AI industry don't understand how neural nets are put together or work,” Suarez Jr. says.

Will there be robot-human conflicts?

The movie’s emotional conflict hinges on the mistrust between Spooner and Sonny — the robot suspected of murdering Alfred Lanning.

Spooner doesn’t trust Sonny because he hates robots. As a younger man involved in an accident, Spooner instructed a robot to save a young girl, but the robot chose him, calculating that he had a better chance of survival than the girl.

The conflict doesn’t necessarily arise because Spooner was right and the robot was wrong; it comes from a difference in perspective. Many humans would probably use similar logic to justify saving an adult man over a child.

“What percentage of humans would choose — if they had that information and if they knew the stats — I wonder how many humans would have still chosen the child?” Suarez Jr. wonders.
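To make the robot's reasoning concrete, here is a minimal Python sketch of a rescue choice driven purely by estimated survival odds. The function name, the `priority` weights, and all numbers are assumptions made up for illustration; none of them come from the article.

```python
def pick_rescue(survival_odds: dict, priority: dict = None) -> str:
    """Return the person to rescue.

    survival_odds maps each person to an estimated chance of survival.
    priority optionally maps a person to a weight expressing how much the
    decision-maker values saving them (defaults to 1.0 for everyone).
    """
    priority = priority or {}

    def score(person: str) -> float:
        return survival_odds[person] * priority.get(person, 1.0)

    return max(survival_odds, key=score)


# Illustrative numbers only.
odds = {"adult": 0.45, "child": 0.11}

print(pick_rescue(odds))                  # odds alone favor the adult
print(pick_rescue(odds, {"child": 5.0}))  # a heavy weight on the child flips the choice
```

The same data yields different decisions depending on the weights, which is one way to picture the "difference in perspective" between Spooner and the robot.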


This kind of robot-human conflict propels the movie forward, but it’s also happening in real life because of differences in perspective among humans. Humans are the ones who program AI, so robots will incorporate the perspective of the humans who created them, leading to potential conflict when AI does something a human doesn’t desire or anticipate.

“Each AI is going to have its own views and its own perspective based on the information that it's been given,” Suarez Jr. says.

In an era where misinformation abounds, it’s more important than ever to carefully curate the information humans feed to AI to prevent future conflicts. Suarez Jr. likens this to a parent preventing their children from watching violent movies.

“It's not so much about AI, but it's a curation of information that we're giving to it,” he says.

With careful curation, AI can be an invaluable tool to help humans by automating dull tasks — like sorting through mounds of spreadsheets — rather than harming them.

Is AI consciousness a real possibility?

VIKI is a supercomputer created by the robotics company in I, Robot.

By the end of the movie, robots are able to exceed their programming and become independently aware, turning on their human overlords. While several aspects of I, Robot trouble Suarez Jr., he’s not so concerned about AI becoming conscious in the near future.

Why not? Well, for one thing, it’s hard to define what constitutes consciousness or sentience — that imprecise awareness of one’s self that separates human beings and certain animals, like primates, from all other living organisms.

“How do we know we're alive? How do we know that we matter?” Suarez Jr. asks. “We don't know why we know. It's one of those things that we can't really describe.”

Anyone who’s spent time dealing with an automated customer service chatbot knows AI can mimic humans fairly well, giving the illusion of consciousness, but it can’t necessarily generate independent thoughts or deviate beyond certain kinds of responses.


But AI doesn’t need to become conscious to do serious harm to society. Ethicists have sounded alarms about the dangers of AI, such as biases in facial recognition technology used by law enforcement, which can perpetuate discrimination against darker-skinned people.

“I'm unbelievably concerned with that,” Suarez Jr. says. He explains that AI relies on the information that’s fed to it in order to make decisions, so it’s important to have a diverse group of developers to reduce the likelihood of biases making their way into the AI, and so reduce its potential harm to society.

“You got to make sure that the people who are curating that information are not just one specific group of people, but instead, as diverse as possible,” Suarez Jr. says. “That's going to be the key to AI.”

I, Robot is streaming now on HBO Max.
