There's a 5% Chance A.I. Leads to Extinction-Level Disaster

Time to start paying attention?

Experts are generally optimistic about the potential of A.I. but also aware of major risks, according to a big new survey.

Precisely 352 experts (who recently published at A.I. conferences) were asked to predict the future, in a paper from researchers at Yale, Oxford, and the Future of Life Institute. While estimates varied greatly, the median result points to a 50 percent likelihood of “high-level machine intelligence,” where unaided machines will accomplish every task better and more cheaply than human workers, by 2061.

As for what effect HLMI will have on humanity, the median respondent put the chances at 20 percent that it would be extremely good; 25 percent that it would be on-balance good; 20 percent that it would be neutral; 10 percent that it would be bad; and, here’s the scary part, 5 percent that it would be extremely bad (e.g., human extinction). (The figures add up to 80 percent rather than 100 because each is a separate median across respondents.)

Facing this risk, 48 percent of respondents said society should devote more resources to research on minimizing the risks of A.I. (only 12 percent said we should deprioritize this research).

Whatever happens, it will likely happen fast: 65 percent of respondents said progress was happening faster in the second half of their careers than the first.

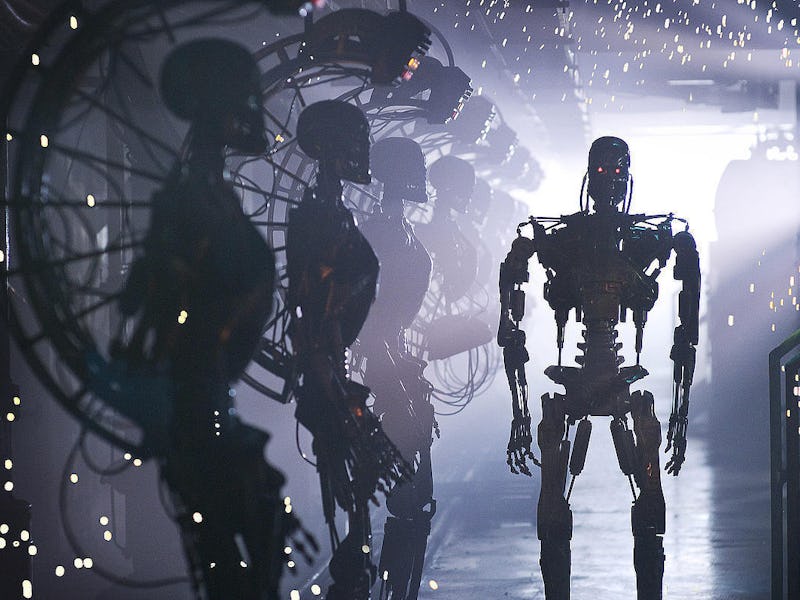

Elon Musk and others warn that military drones could lead to an autonomous arms race.

While the respondents didn’t explain how A.I. could go horribly wrong, we can look to warnings from the likes of Elon Musk and Stephen Hawking. One worry those two cited was an arms race in autonomous weapons, which “require no costly or hard-to-obtain raw materials [and so would] become ubiquitous and cheap for all significant military powers to produce … [and would inevitably] appear on the black market and in the hands of terrorists, dictators looking to better control their populace, warlords wishing to perpetrate ethnic cleansing, etc.”

Musk offered A.I. risk as a major reason to boost human intelligence through neural links: “A.I. that is much smarter than the smartest human on Earth — I think that is a dangerous situation.”

But, hey, don’t forget about all the cool things A.I. will do in the coming years. And remember, there’s a 20 percent chance this will all turn out extremely well.
