Scientists just taught a robot to complete this deceptively simple task

A team of researchers successfully programmed a robot to dress a mannequin.

Despite fears that robots will conquer the world, most of them, with a few exceptions, still struggle with basic tasks such as opening doors or climbing stairs. Now, in a new study appearing online April 6 in Science Robotics, scientists have taught a robot the deceptively simple chore of dressing a mannequin.

One of the biggest challenges robots face is dealing with soft, flexible materials such as cloth, which change shape when touched or moved.

Before robots can truly prove useful in medical settings, they need to be able to handle fabric when caring for patients, including helping people get dressed. More than 80 percent of patients in skilled nursing homes need help with dressing. Of all the chores related to daily living, dressing imposes the highest burden on caregiving staff and sees the lowest use of assistive technologies.

Teaching a robot how to handle cloth often requires extensive computer simulations, which frequently fail to fully mimic how fabric behaves in the real world. On the other hand, letting robots learn by watching real videos of textiles in motion usually proves slow: robots cannot make sense of the cloth they see in the videos on their own, so researchers need to help them interpret such data.

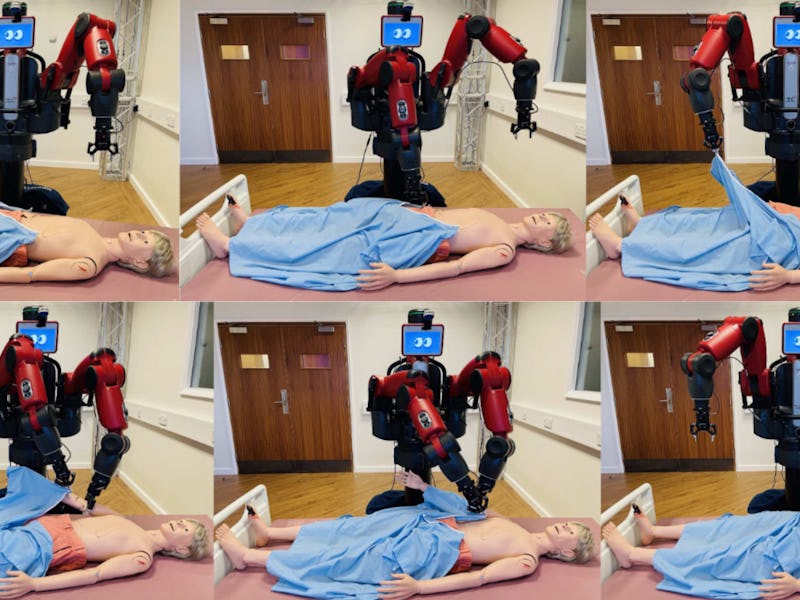

The system in action.

WHAT DID THE SCIENTISTS DO? The researchers developed a series of steps to help a two-armed robot dress a mannequin lying in bed in a hospital gown. Breaking the procedure down step by step helped the machine avoid problems that could stop it dead in its tracks. For instance, the researchers taught it how to separate and grasp just one layer of the gown, instead of grabbing multiple layers at once in ways that might end up blocking openings such as sleeves.

The scientists also found a way to help bridge the gap between simulations and reality when it came to cloth. They generated many computer models of fabric that varied randomly in color and texture, then had the robot compare these simulations with video of the real garment and choose the virtual cloth that was most similar.

A diagram of how the breakthrough works.
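
The selection step described above can be illustrated with a toy sketch. The study's actual comparison method is not detailed here, so everything below is an assumption made for illustration: the `ClothAppearance` type, the single `texture` score, and the plain Euclidean distance are hypothetical stand-ins, not the researchers' implementation.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ClothAppearance:
    """Hypothetical summary of how a piece of cloth looks."""
    color: tuple[float, float, float]  # RGB, each channel in [0, 1]
    texture: float                     # made-up roughness score in [0, 1]


def random_appearance(rng: random.Random) -> ClothAppearance:
    """Draw one simulated cloth with random color and texture."""
    return ClothAppearance(
        color=(rng.random(), rng.random(), rng.random()),
        texture=rng.random(),
    )


def distance(a: ClothAppearance, b: ClothAppearance) -> float:
    """Euclidean distance over color and texture (an assumed metric)."""
    color_gap = sum((x - y) ** 2 for x, y in zip(a.color, b.color))
    texture_gap = (a.texture - b.texture) ** 2
    return (color_gap + texture_gap) ** 0.5


def most_similar(real: ClothAppearance,
                 candidates: list[ClothAppearance]) -> ClothAppearance:
    """Pick the simulated cloth closest to the observed garment."""
    return min(candidates, key=lambda c: distance(real, c))


# Generate many randomized virtual cloths, then choose the best match
# for a (made-up) observation of the real gown.
rng = random.Random(0)
candidates = [random_appearance(rng) for _ in range(500)]
real_gown = ClothAppearance(color=(0.55, 0.70, 0.85), texture=0.30)
best = most_similar(real_gown, candidates)
```

In a real system the appearance features would come from camera images and rendered simulations rather than hand-picked numbers, and the similarity measure would likely be learned rather than fixed.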

"I really like the approach they found to maximize the learning from simulation demonstrations," Júlia Borràs Sol, deputy director of the Robotics Institute in Barcelona, who did not take part in this research, tells Inverse. "We will definitely try to reproduce it in our lab to see if we improve what we can learn from our own simulations."

The researchers had the robot grasp a back-opening hospital gown hung on a rail around the collar, fully unfold the gown, and navigate around a bed holding a medical training mannequin. The 18-kilogram (40-pound), 174-centimeter (five-foot-eight) mannequin imitated a patient who has completely lost the ability to move their limbs. The robot then lifted and dressed the mannequin's arms and spread the gown to cover the mannequin's upper body.

WHAT DID THEY FIND? The robot learned to successfully dress the mannequin more than 90 percent of the time during 200 trials.

"Advances in computer vision and robotic manipulation are enabling assisted dressing," Borràs notes in a commentary on the new work.

WHAT'S NEXT? Future research may focus on helping robots control the shape of cloth after grasping it and understand how garments might deform.

"This is important to be able to correct situations when something goes unexpectedly," Borràs says.

In the future, robots may not only dress patients, but help doctors and nurses don their personal protective equipment as well.

"That has been especially relevant during the pandemic, where nurses spent a lot of time putting on such personal protective equipment and often needed the assistance of other nurses to do it," Borràs says. "If a robot can provide such assistance, we could optimize nurses' and doctors' time."