Siri Google Voice Comparison: Did Apple Clap Back Against Smart Compose at WWDC?

Apple and Google are working to make their machines smarter.

It was the battle of the brains on Monday, as Apple took to the stage at the Worldwide Developers Conference to show its super-smart software could compete with Google. The two companies are locked in an intense battle, particularly in artificial intelligence, with both developing virtual assistants that provide answers faster than ever before.

As part of Gmail’s redesign last month, Google introduced a new application of these A.I. tools to make composing and sending emails faster. The company unveiled Smart Compose at the Google I/O keynote address, with CEO Sundar Pichai explaining how it offers what are essentially autocomplete suggestions for sentences.

As expected, Apple’s announcement included a number of new features designed to help Siri better compete with Google’s A.I. offerings like Google Voice and predictive text. Here’s how they stack up for now.


WWDC 2018: Siri Shortcuts Are Getting Better

One area where Apple more explicitly took on Google was through a number of improvements to its Siri voice assistant, which underpins the $349 HomePod smart speaker that launched earlier this year.

As expected, the new updates to Siri mean the system can make smarter suggestions. Shortcuts is a new addition that enables users to design their own commands to save time: as an example, users can say “coming home” to have the thermostat set to an acceptable level and the music playing when they walk in the door.

WWDC 2018: macOS Mojave and A.I.

With Apple’s next Mac operating system, macOS Mojave, the company plans to make Mail work smarter. The app will now suggest the correct inbox for a message when it is selected, enabling users to sift through their mail faster. There’s also improved support for emojis, and working with files is easier thanks to improved Quick Look options with the ability to send files directly.

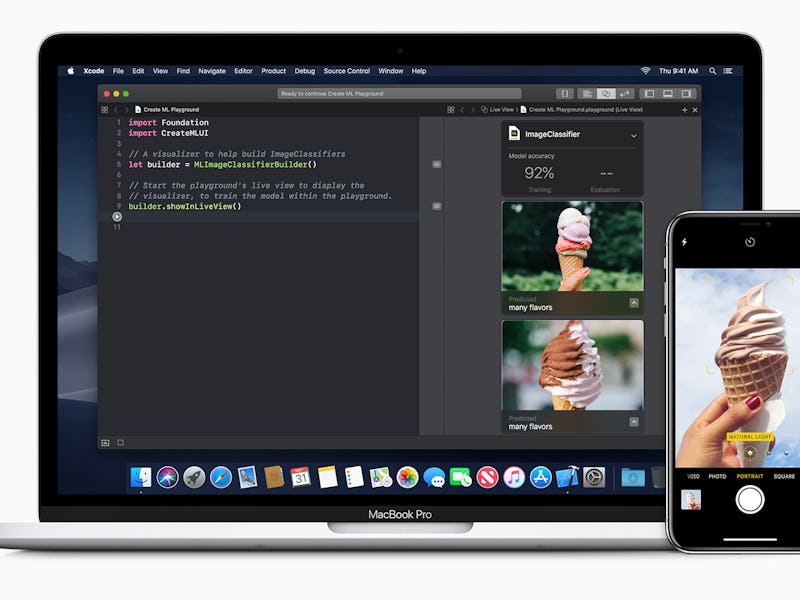

As for a Smart Compose competitor, Apple avoided taking Google head-on, instead encouraging third parties to build their own machine learning-powered apps with “Core ML 2,” a developer framework that Apple uses internally across its product lines. Updates enable developers to try out their creations in the Xcode developer interface. A Natural Language framework allows app makers to analyze text and run it through Core ML models. Apple isn’t making “iSmart Compose,” but it is giving developers tools to make their own.

macOS Mojave.

WWDC 2018: iOS 12 and A.I.

On the iPhone side, Apple has made little change to its predictive texting model first introduced with iOS 8. This offers users a choice of three options from a bar placed along the top of the keyboard, allowing users to touch the next word to complete a sentence faster. It’s fun, simple, and it’s inspired bouts of awful slam poetry.

Beyond the keyboard, Apple is extending those same Core ML improvements to its smartphones. It’s also building on the photo analysis tools that enable users to search through their images more quickly, with automatically applied tags depending on the content. With iOS 12, users will receive suggestions for events, people and places as they type.

Apple Google A.I. Comparison: Who’s Winning?

Apple’s new announcements are not quite as impressive as Google I/O’s demonstration of the Assistant calling a business and booking an appointment automatically, but Apple is quietly forging its own A.I. path by enabling more features that leverage internal processors without connecting to the cloud, through the likes of Core ML and other tools.

Borui Wang, the CEO of Polarr and developer of the app Album Plus, told Inverse last November that offline A.I. is “going to be the new buzzword for the next decade.” Whether the future lies with big servers calculating answers, or whether Apple’s privacy-focused offline efforts prove a better route, remains to be seen.