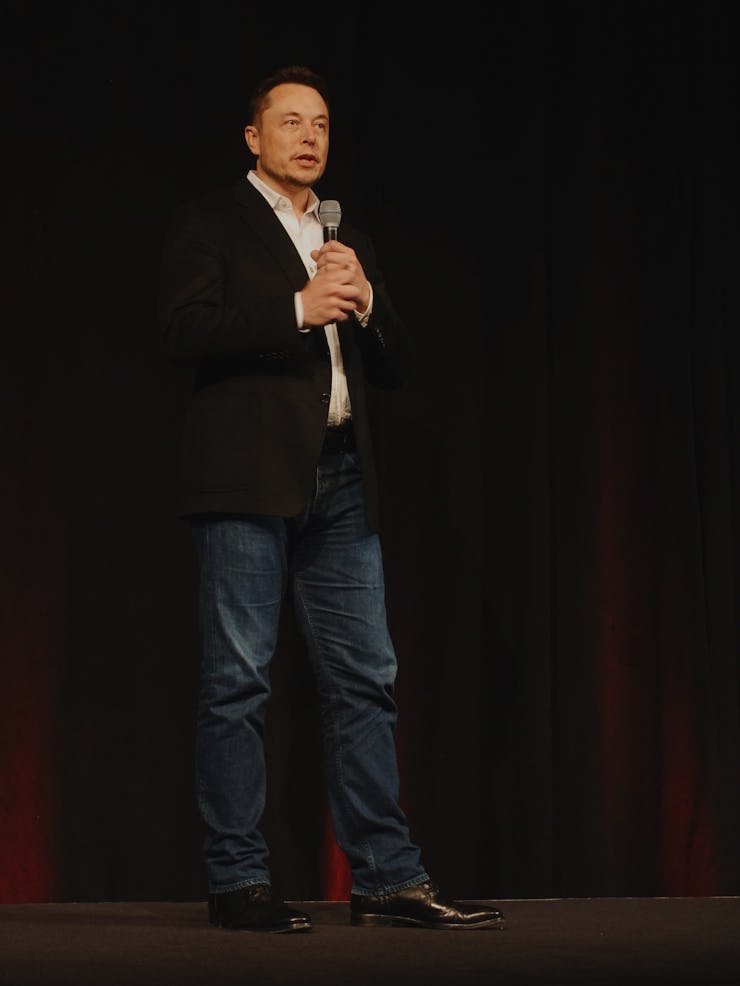

"Deep A.I.," Not Automation, Will Doom Humanity, Says Elon Musk

There's an important distinction.

Twitter is not exactly an ideal place to unpack large ideas, but it has nevertheless become something of a goldmine for tech luminaries looking to hint at them. Elon Musk, the famed Tesla and SpaceX CEO, has never been shy about expressing concerns that A.I. might one day lead to the downfall of human beings. Last Friday, though, he offered a small insight: his fears shouldn’t preclude the rise of automation, a field of technology that is distinct from A.I. but that many people conflate with it.

A Twitter user sent Musk a Business Insider post about Tesla’s new “summon” feature for its cars. It’s a nifty tool by itself, but the user (and many others, probably) couldn’t help but wonder if this was just another inadvertent step toward a future in which an army of self-driving cars turns on its human masters, wipes out the species, and becomes the dominant intelligence on the planet. She asked Musk whether this was just the first sign that we’re heading towards a “robotic apocalypse.”

Musk, it seems, saw the tweet, and had a pretty pithy response:

For techies, automation and A.I. are different things. Automation essentially refers to the ability of machines to perform programmed functions automatically, without a human at the controls. Robots in a manufacturing facility fall under this realm. Smart-home systems that control temperatures or electricity usage automatically are another example. Self-driving vehicles are a kind of advanced automation: they operate autonomously, but with the single purpose of getting from point A to point B safely and efficiently.

See, automation systems simply cannot think for themselves. They respond automatically to changing stimuli from the environment and adapt in order to continue to fulfill their function, and they are to some extent designed to learn and get better as they work. But they are not engaged in active learning the way humans are.

A.I. systems, and especially deep A.I., are built on the types of base algorithms that are specifically designed to absorb information and process it to become more intelligent. They aren’t programmed to solve a single problem; they are systems that work as problem solvers themselves.

That’s why people like Musk have expressed real concerns about A.I.: it’s pretty conceivable that those systems could learn fast enough to spiral out of human control. If automation systems start to attack humans, it’s not because they are pulling off an uprising. It’s because they are buggy, or because they have been hacked by hostile parties. A.I., on the other hand, could actively teach itself the idea that humans are a threat, and that’s where the real trouble comes in.

(It’s probably worth remembering as well that Musk’s business ventures rely on automation, especially when it comes to manufacturing Tesla cars, SpaceX rockets, and whatever else his future companies will make. It’s in his best interest to make sure consumers understand the difference.)

Automation won’t be taking over the world anytime soon. It might be after your job, but that’s a story for another time.