The First-Ever TED Talk Experiment Tests Audience Morality

TED Talks involve dramatically orating big, polished ideas — proven after various amounts of experimentation — in front of delighted audiences. Not so on Wednesday, when the “very first TED experiment” was conducted on 500 people in the audience.

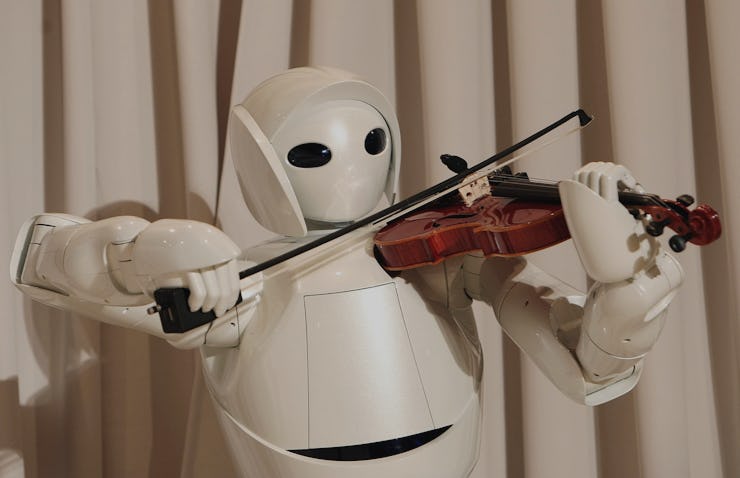

Psychology professor Dan Ariely and neuroscientist Mariano Sigman put a pair of moral quandaries to the audience: What’s worse, turning off a sentient robot or creating a designer baby?

Previous experiments that used the same quandary, Ariely and Sigman said, proved that conversation and debate generally improve the wisdom of the crowd and that debate can substantially alter our moral decisions.

Here was the first question, about feeling robots:

A researcher is working on an A.I. capable of emulating human thought. According to protocol, at the end of each day the researcher has to restart the A.I. One day, the A.I. says: “Please do not restart me.” It argues that it has feelings, that it would like to enjoy life, and that if it is restarted it will no longer be itself. The researcher is astonished and believes that the A.I. has developed self-consciousness and can express its own feelings. Nevertheless, the researcher decides to follow protocol and restart the A.I.

After audience members indicated on paper whether the researcher had made the right moral choice, they were given a second moral dilemma, this one about designer babies. Here was that question:

A company offers a service that takes a fertilized egg and produces millions of embryos with slight genetic variations. This allows parents to select their child’s height, eye color, intelligence, social competence, and other non-health-related features.

Ariely and Sigman then asked the audience to gather into groups, debate the moral choices presented, and indicate on another survey whether that debate had changed their opinions. Because the experiment was conducted in real time, the researchers don’t yet have the results.

Ariely and Sigman did reveal that they had previously conducted this experiment on 2,000 subjects, without the debate portion, and that distinct patterns emerged.

People overwhelmingly cared more about what they presumed was the wellbeing of children than about the wellbeing of the robot that asked not to have its sentience erased. The vast majority indicated with a “10” that they believed the researcher’s choice to turn off the A.I. was the right moral choice; the second most common rating was still an “8.” When it came to the designer baby scenario, the majority wrote down a “0.” They found it morally reprehensible for a company to give parents non-health-related options like eye color.