Stephen Hawking Just Opened an A.I. Lab That Will Research Droids

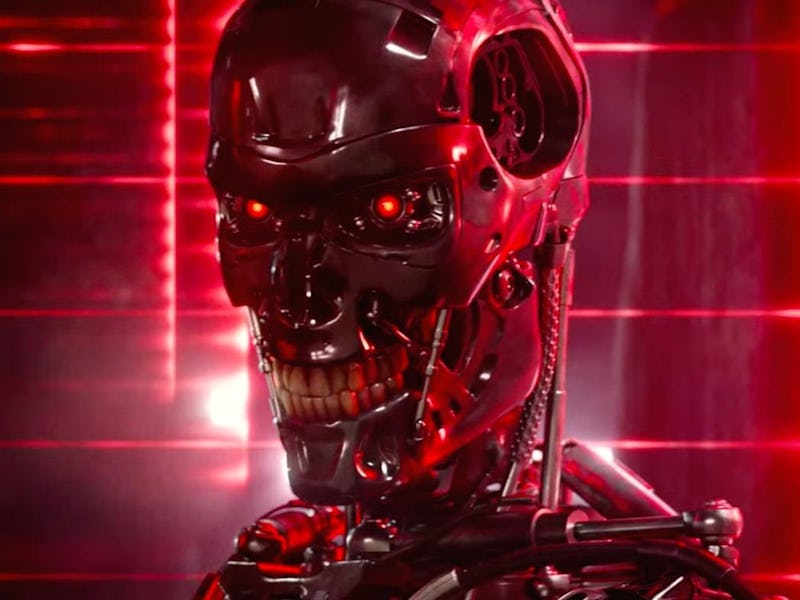

Professor Stephen Hawking unveiled an A.I. research center at Cambridge University on Wednesday that will study, among other areas, Terminator-style army droids. The Leverhulme Centre for the Future of Intelligence (CFI) wants to guide the development of A.I. to ensure that it is beneficial to society. In the case of military applications, the CFI will consider how policymakers should regulate intelligent weapons.

“Perhaps with the tools of this new technological revolution, we will be able to undo some of the damage done to the natural world by the last one — industrialization. And surely we will aim to finally eradicate disease and poverty. Every aspect of our lives will be transformed,” Hawking said at the center opening, attended by the AFP. “In short, success in creating A.I. could be the biggest event in the history of our civilization.”

The center is a collaborative effort between Imperial College London, the Universities of Cambridge and Oxford, and the University of California, Berkeley. The CFI will research how A.I. can both benefit and harm humanity, bringing in experts from all areas of life to consider how new technologies may affect their fields.

Professor Stephen Hawking.

Military robots are an area of increasing interest. In July, a police robot killed a suspect for the first time, after Micah Xavier Johnson opened fire on police officers during a Black Lives Matter protest. It is unlikely the bot acted autonomously. The Pentagon is researching A.I.-powered versions of these bots, but its current position is that a human should always make the final decision over whether to kill.

Ethicists are currently discussing how best to regulate A.I. systems like the ones powering these robots. The British Standards Institution (BSI) has released its own guide, and the IEEE Standards Association is working on an internationally focused set of guidelines due to be released in December. It’s not clear how the CFI’s work will fit into these efforts, but its aim of involving policymakers suggests the center wants to help produce legal regulations. The BSI and IEEE, by contrast, are more focused on “best practice” outlines for developers.