Neurorobotics Is Bringing the "Efficiency" of the Human Brain to A.I. Robots

Getting a machine to think like a human is hard enough. Getting a machine to be as power-efficient as a human is even tougher.

Time and time again, the leaders of robotics and A.I. make plain what their ultimate goal is: to develop a machine system that’s capable of emulating the human brain. In fact, that’s one of the core mission tenets behind the Human Brain Project — a 10-year, $1.4 billion endeavor funded by the European Union that seeks to move neuroscience, computer science, and brain-related medicine forward in a very profound way.

One subproject of the HBP falls under the term “neurorobotics,” where machines are built to essentially simulate neural network processes. You might have heard of this work under the phrase “neural network,” and in many ways, those worlds overlap. But neurorobotics goes one step further, and looks to not just mimic the kinds of things human brains are capable of, but also mimic the same power efficiency.

See, mammalian brains are more incredible than most people realize. They have what Florian Rohrbein, the managing director for HBP’s neurorobotics project, calls an “extreme power- and space-efficiency.” They can process an incredible amount of sensory information and work through a ton of different data without suffering from the kinds of problems physical computers run into, and they do it all in a glob of tissue that fits snugly in a skull the size of a playground ball. Getting an artificial system to work the same way would open up the possibility of fitting a supercomputer into the head of a machine made of wire, metal, and plastic.

“It’s high risk, high gain research,” Rohrbein told attendees at the RoboUniverse 2016 meeting in New York City on Monday. He believes that neurorobotics can play a pivotal role in the development of new kinds of prostheses that are more in tune with human cognition. Neurorobotics might even inspire a new wave of robots that better mimic physiological and behavioral patterns found in animals, such as multiple robots moving and operating as a cohesive swarm.

Ultimately, the goal isn’t for these types of machines to replace human thinking, but rather to support and augment what people are able to do. Rohrbein used a pair of image comparisons to make his point. First, he showed two consecutive images of what seemed to be just random static on a broken television. Then he put up two images that were starkly different: a distant photo of a tropical island at sea and a photo of two zebras colliding with one another.

The big reveal was that the former pair of images, the static ones, were actually more different than the latter pair, at least when you deconstruct them bit by bit. “Our brains are adapted to the perception of surroundings,” said Rohrbein. In other words, our minds are programmed to look for sweeping differences rather than minute ones. There are so many things we don’t really see in the world, and as a result, “they are lost,” said Rohrbein. A neurorobotic machine, by contrast, could make the distinction with little trouble.
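To make the “bit by bit” comparison concrete, here is a minimal Python sketch. It is not Rohrbein’s demonstration or anything from the HBP platform. The images are synthetic stand-ins, the 64-pixel size is arbitrary, and the function names are hypothetical. It scores each pair by the average absolute difference between corresponding pixels, one simple way (assumed here) of measuring how different two images are at the lowest level.

```python
import random

SIZE = 64  # toy image side length in pixels (an assumption for this sketch)

def mean_abs_diff(a, b):
    """Average absolute per-pixel difference between two same-size grayscale images."""
    total = sum(abs(pa - pb)
                for row_a, row_b in zip(a, b)
                for pa, pb in zip(row_a, row_b))
    return total / (len(a) * len(a[0]))

def static_frame(rng):
    """TV-static stand-in: every pixel is an independent random gray level (0-255)."""
    return [[rng.randrange(256) for _ in range(SIZE)] for _ in range(SIZE)]

def square_scene():
    """Toy 'photo': a bright square on a mid-gray background."""
    return [[220 if 8 <= x < 24 and 8 <= y < 24 else 120
             for x in range(SIZE)] for y in range(SIZE)]

def stripe_scene():
    """Toy 'photo': dark horizontal stripes on the same mid-gray background."""
    return [[40 if y % 8 == 0 else 120 for x in range(SIZE)]
            for y in range(SIZE)]

rng = random.Random(0)  # fixed seed so the run is repeatable
static_diff = mean_abs_diff(static_frame(rng), static_frame(rng))
scene_diff = mean_abs_diff(square_scene(), stripe_scene())

print(f"static vs. static:  {static_diff:.1f}")
print(f"square vs. stripes: {scene_diff:.1f}")
```

On toy inputs like these, the two static frames score as far more different than the two structured scenes, even though a person would call the static frames interchangeable and the scenes obviously distinct. Real photographs will not always behave this way, so treat the numbers as an illustration of the idea rather than a general result.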

The neurorobotics team has made some good progress so far. As part of its long-term goal of creating a closed-loop system, it has just released its software platform for open source collaboration.

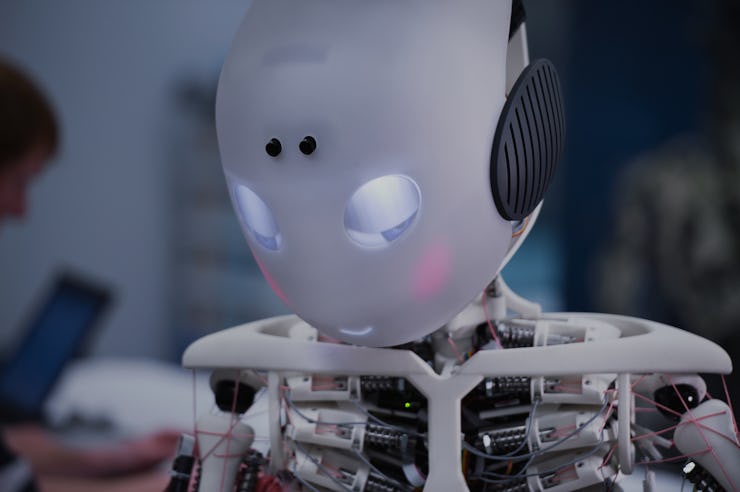

The team, in collaboration with Myorobotics, has developed a slew of autonomous, musculoskeletal robots, such as arms and other appendages, based on the neurorobotics architecture it’s worked on. The latest breakthrough is Roboy, a humanoid robot capable of interacting with people and accomplishing minor tasks like walking and shaking hands, small feats for us, but giant leaps in the world of robotics.

Rohrbein says he and the team want to be able to successfully emulate 10 percent of the human brain within the next few years. “We want better brains for a smarter system,” he said, and he and his team want to do it while cutting the costs of the technology across the board. With how much money is being poured into their work, they stand a chance of succeeding.