Promising New MIT Internet Browser Tech Polaris Loads Websites up to 59% Faster

"We are currently thinking about the best release strategy."

Two Ph.D. students and a professor from MIT’s Computer Science and Artificial Intelligence Laboratory, joined by a professor at Harvard, have released a new method for loading websites faster. The system, dubbed Polaris, loads most pages 34 percent faster than their current loading speeds. Pages in the 95th percentile — those that are the most complex, like that of the New York Times — load 59 percent more quickly.

This is a significant accomplishment, and not only because it makes an already fairly painless experience that much more painless. The paper also notes what better speeds mean for the websites themselves:

“Extra delays of just a few milliseconds can result in users abandoning a page early; such early abandonment leads to millions of dollars in lost revenue for page owners. A page’s load time also influences how the page is ranked by search engines—faster pages receive higher ranks.”

The paper’s lead author, Ravi Netravali, explained to Inverse that his team’s “main goal is widespread adoption by many websites.”

“As it stands, to use Polaris, a site must generate a fine-grained dependency graph (automatically, using Scout) and respond to client requests with the graph and Polaris JavaScript scheduler,” Netravali wrote in an email. “Browsers can treat this response as a standard JavaScript object (no browser modifications are required) and the page will load completely (and efficiently).”
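To make the idea of a dependency graph concrete, here is a minimal sketch in Python. It is not Polaris or Scout code: the page objects and the `load_waves` helper are hypothetical, and the grouping is a plain topological sort from the standard library rather than the scheduler described in the paper. It only shows how a client that already holds the full graph could work out which objects are safe to request together.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical page: each object maps to the set of objects it depends on.
# These names are illustrative and do not come from the Polaris paper.
deps = {
    "index.html": set(),
    "app.js": {"index.html"},
    "style.css": {"index.html"},
    "data.json": {"app.js"},
    "hero.jpg": {"style.css"},
}

def load_waves(graph):
    """Group objects into waves; every object in a wave has all of its
    prerequisites satisfied by earlier waves."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)
    return waves

for number, wave in enumerate(load_waves(deps), start=1):
    print(number, wave)
```

With this toy graph the objects fall into three waves: the HTML first, then the script and stylesheet, then the JSON and image that those two pull in.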

Netravali said that another goal of his team is to incorporate Polaris into existing browsers like Chrome, Firefox, and Edge. “This would make adoption even more widespread. So, we are currently thinking about the best release strategy to make this happen.”

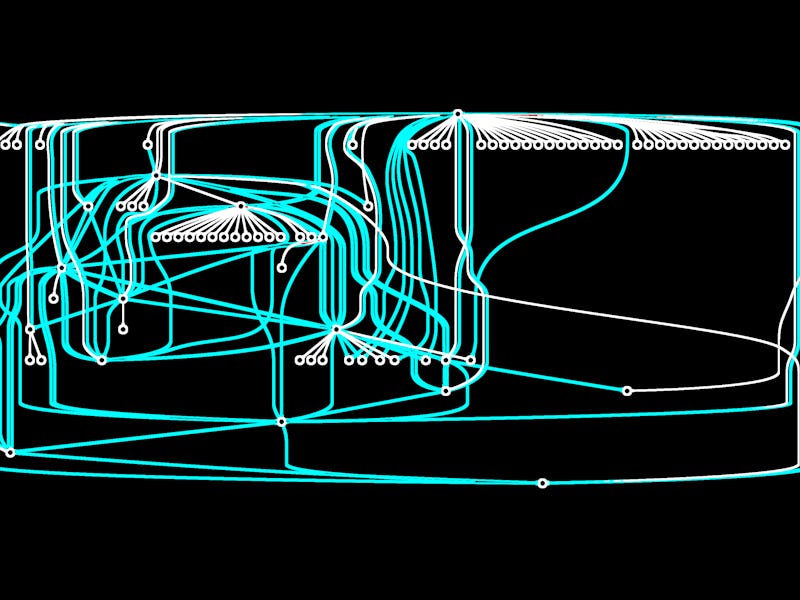

Websites that are especially complex benefit most from Polaris. The researchers tested their system on 200 sites. (The most complicated site in this group was weather.com, and ESPN.com also enjoyed significant improvements in page-load time.) These sites have intricate “dependency graphs,” which Polaris maps and, in a sense, demystifies and prioritizes.

These benefits showcase what Polaris does best: optimizing how browsers understand websites. Harvard professor James Mickens likens it to traveling. A traveler who knows the itinerary ahead of time (the entire list of cities and countries to visit) can craft an efficient journey. But a trip that resembles a scavenger hunt may be very inefficient: the traveler goes to one city, then another, only to learn it would have been easier to visit the next city on the way from the first to the second.

“Performance with Polaris depends on both network conditions and the structure/complexity of a web page,” Netravali explains. “Regarding network conditions, gains will be largest when delays are high (e.g., cellular networks). With respect to complexity, gains increase as pages have more and more objects (especially dynamic objects that can lead to subsequent object fetches). So, for example, a site like www.apple.com does not see much gains with Polaris since the site is quite simple (it has few objects, mostly images, so request ordering doesn’t matter much). Such sites are very uncommon today (and the trend is that they, too, will become more complex in the future). Sites at the median are more like ESPN’s homepage. These sites have far more objects and do benefit from Polaris as certain objects have higher priorities over others. Then, at the 95th percentile, there are sites like weather.com and nytimes.com which have many objects (100s) and really need intelligent request scheduling, which Polaris does.”
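A back-of-the-envelope model helps show why both factors matter. The Python sketch below is a deliberate oversimplification and not the paper's evaluation method: it assumes every fetch costs exactly one round trip, ignores bandwidth and script execution, and assumes a client holding the full graph can request everything beyond the root in parallel. The two example pages and the function names are hypothetical.

```python
def chain_depth(obj, deps):
    """Length of the longest dependency chain ending at obj (the root is 1)."""
    parents = deps[obj]
    if not parents:
        return 1
    return 1 + max(chain_depth(parent, deps) for parent in parents)

def round_trips(deps, graph_known):
    """Toy model: count sequential round trips needed to fetch every object."""
    deepest = max(chain_depth(obj, deps) for obj in deps)
    if graph_known:
        # Assumption: one trip for the root (which carries the graph),
        # then at most one more trip for everything else in parallel.
        return min(deepest, 2)
    # Without the graph, each level is discovered only after its parent arrives.
    return deepest

def saved_ms(deps, rtt_ms):
    """Milliseconds saved by knowing the graph up front, under the toy model."""
    return (round_trips(deps, False) - round_trips(deps, True)) * rtt_ms

# Hypothetical simple page: a few images hanging directly off the HTML.
flat = {"index.html": set(), "a.png": {"index.html"}, "b.png": {"index.html"}}

# Hypothetical complex page: scripts that fetch further scripts and data.
chained = {
    "index.html": set(),
    "app.js": {"index.html"},
    "widgets.js": {"app.js"},
    "scores.json": {"widgets.js"},
}

for rtt in (25, 200):
    print(rtt, saved_ms(flat, rtt), saved_ms(chained, rtt))
```

Under these assumptions the flat page saves nothing at either delay, while the chained page saves 50 ms at a 25 ms round trip and 400 ms at a 200 ms round trip, which matches the direction of the trend Netravali describes.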

The most complex sites, like ESPN.com and Weather.com, benefit the most. (RTT stands for "round-trip delay time.")

Hari Balakrishnan, the MIT CSAIL professor on the project, points out that the technology will not be forced on anyone, but presents an opportunity. “Sites that want acceleration can use Polaris without browser modification,” he said. “It is up to the content provider sites to decide to use it.”