Google is Bullish About Using A.I. To Combat Hate Speech on YouTube

Big tech companies that host troves of user content can make their own decisions about what to allow on their sites. But, if nothing else, they are still bound by different countries’ laws regulating hate speech. Google argues that enforcing those rules is a task better suited to artificial intelligence.

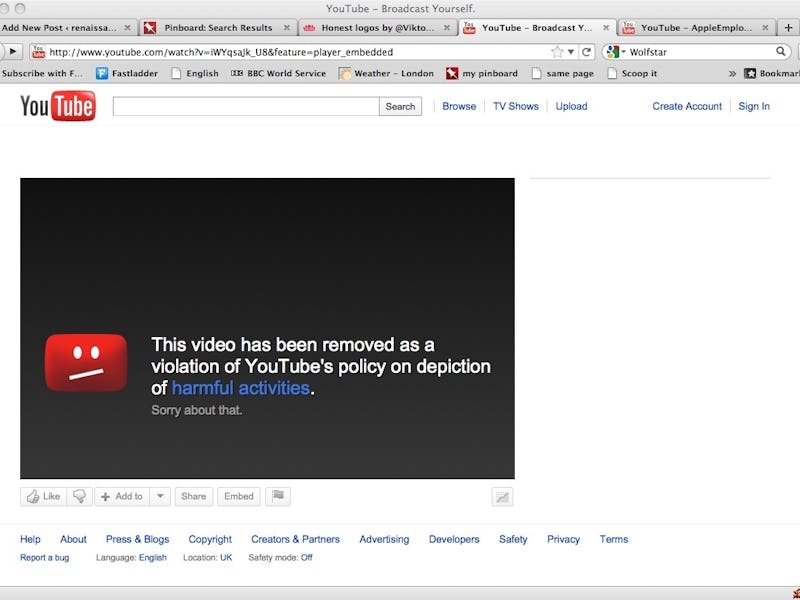

When it comes to excising hate speech videos and illicit content from YouTube, Google plans to go hard on developing automated programs and machine learning to scan the world’s second most popular website for extremist and controversial materials. While human moderators take care of the bulk of this work right now, YouTube is already rolling out A.I. systems to handle these duties, and machines will take on more of that work as time passes.

About a month ago, a YouTube spokesperson acknowledged hate speech was becoming a bigger issue. The company hopes A.I. can be the solution to cracking down on the dissemination of extremist speech across the website.

“Our initial use of machine learning has more than doubled both the number of videos we’ve removed for violent extremism, as well as the rate at which we’ve taken this kind of content down,” a YouTube spokesperson told The Guardian. “Over 75 percent of the videos we’ve removed for violent extremism over the past month were taken down before receiving a single human flag.”

About 400 hours of content are uploaded to YouTube every minute. Moderating all that video requires a highly efficient system, which makes machines an ideal solution.

Of course, the big question is exactly what standards YouTube plans to enforce. According to The Guardian, the company doesn’t necessarily have to do anything about content that is objectionable, but not illegal. Those kinds of videos will not be taken down, but rather made available in a “limited state.” It’s unclear exactly what that means, but the company spokesperson told The Guardian, “The videos will remain on YouTube behind an interstitial, won’t be recommended, won’t be monetized, and won’t have key features including comments, suggested videos, and likes.”

Moreover, YouTube is forced to censor different types of videos depending on where the user is accessing the site. Trained algorithms will need to be customized according to different countries’ standards and laws.

One big concern that seems to go unaddressed is whether these A.I. censorship programs may go too far, needlessly scrubbing content that violates no laws or speech standards, or whether they could be hijacked for nefarious purposes. Those issues, of course, permeate the rest of the internet as well, and it’s unlikely YouTube and Google will be able to fully escape such concerns.