A New Algorithm Will Help Take the First Picture of a Black Hole

How to see something that is defined as unseeable.

Actually looking at the black hole sitting in the center of the Milky Way is like seeing a grapefruit-sized shadow wearing a glow ring on the Moon — pretty close to impossible.

But a new algorithm is changing that: “We’re trying to take the first image of a black hole,” Katie Bouman, a Ph.D. candidate in electrical engineering and computer science at MIT, tells Inverse. Her algorithm, combined with the data that will be gathered by the Event Horizon Telescope project, could produce this seemingly impossible picture within a year. Difficult as it is, actually viewing a black hole is one of the final tests of Einstein’s theory of general relativity, and could determine whether our understanding of physics breaks down at the event horizon of a black hole.

Either Sagittarius A*, at the center of the Milky Way, or the supermassive black hole in the M87 galaxy could be the first black hole on camera in the spring of 2017, when the Event Horizon Telescope project goes live. A single telescope powerful enough to see a black hole would need a dish 10,000 kilometers across, nearly the size of the Earth. So the Event Horizon Telescope project is using six telescopes across the globe to gather radio signals for six to twelve hours at a time. As the Earth rotates, each of the six telescopes sweeps out its own stream of data, as if the whole Earth were a telescope. You get coverage of radio frequencies like a time-exposure shot of the night sky. But just like filming the night sky, there are still a lot of blank spaces in the footage without light.
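To see where a figure like 10,000 kilometers comes from, here is a rough back-of-the-envelope sketch. It assumes the standard diffraction estimate (resolution is roughly wavelength divided by aperture) and an observing wavelength of 1.3 millimeters, which the article does not state. The 30-meter dish is a hypothetical single telescope included only for comparison.

```python
import math

# Conversion factor from radians to microarcseconds.
RAD_TO_MICROARCSEC = math.degrees(1) * 3600 * 1e6

def diffraction_limit_microarcsec(wavelength_m, aperture_m):
    """Approximate angular resolution (wavelength / aperture) in microarcseconds."""
    return wavelength_m / aperture_m * RAD_TO_MICROARCSEC

WAVELENGTH_M = 1.3e-3        # assumption: 1.3 mm radio waves (not stated in the article)
EARTH_SIZED_DISH_M = 1.0e7   # the 10,000 km dish described above
SINGLE_DISH_M = 30.0         # hypothetical 30 m dish, for comparison only

earth_sized = diffraction_limit_microarcsec(WAVELENGTH_M, EARTH_SIZED_DISH_M)
single_dish = diffraction_limit_microarcsec(WAVELENGTH_M, SINGLE_DISH_M)

print(f"Earth-sized dish: {earth_sized:.1f} microarcseconds")
print(f"30 m dish:        {single_dish / 1e6:.1f} arcseconds")
```

Under these assumptions, the Earth-sized dish resolves details a few tens of microarcseconds across, while the single dish is hundreds of thousands of times blurrier.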

And this is where Bouman’s algorithm comes in: it transforms the sparse radio wave data into a single image. The inverse-learning algorithm, CHIRP (for Continuous High-resolution Image Reconstruction using Patch priors), looks at hundreds of images and crops them into small patches. Using these patches like puzzle pieces, the algorithm tries to cluster and describe similar patches. From these patch models, CHIRP then reconstructs the image.
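The patch idea can be illustrated with a toy sketch. This is not CHIRP itself: it swaps in plain k-means clustering as a stand-in for the patch models, runs on a synthetic image, and all function names are hypothetical. It only shows the first two steps described above, cropping an image into small patches and grouping similar ones.

```python
import numpy as np

def extract_patches(image, size):
    """Cut a 2-D image into non-overlapping size x size patches, one flattened patch per row."""
    h, w = image.shape
    h, w = h - h % size, w - w % size              # drop ragged edges
    blocks = image[:h, :w].reshape(h // size, size, w // size, size)
    return blocks.swapaxes(1, 2).reshape(-1, size * size)

def assign(patches, centroids):
    """Index of the nearest centroid (squared Euclidean distance) for each patch."""
    dists = ((patches[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return dists.argmin(axis=1)

def kmeans(patches, k, iters=20, seed=0):
    """Plain k-means, used here as a simple stand-in for a learned patch model."""
    rng = np.random.default_rng(seed)
    centroids = patches[rng.choice(len(patches), size=k, replace=False)].copy()
    for _ in range(iters):
        labels = assign(patches, centroids)
        for j in range(k):
            members = patches[labels == j]
            if len(members):                       # keep the old centroid if a cluster empties
                centroids[j] = members.mean(axis=0)
    return centroids, assign(patches, centroids)

# Synthetic "training image": smooth waves plus a little noise (an assumption for the demo).
rng = np.random.default_rng(1)
yy, xx = np.mgrid[0:64, 0:64]
training_image = np.sin(xx / 5.0) + np.cos(yy / 7.0) + 0.05 * rng.standard_normal((64, 64))

patches = extract_patches(training_image, size=4)  # 256 patches of 16 pixels each
centroids, labels = kmeans(patches, k=4)
print(patches.shape, centroids.shape, np.bincount(labels, minlength=4))
```

Each centroid is an "average" patch for its cluster. A real reconstruction would use such patch statistics as a prior while fitting the telescope data, which this sketch does not attempt.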

“Even though, on a whole, images are very different,” Bouman says, “little pieces of them are very similar.” She tested her algorithm by using different pieces of an image and seeing if it reconstructed the same image. She created her own database to test and compare similar algorithms to find the best models. It also didn’t matter whether she used space images or ordinary pictures to train the algorithm. Once the algorithm could cluster patches, no matter what image patches it viewed, it could produce very similar images. “If we get a very similar image under different types of priors,” Bouman says, “that means that what we’re seeing is most likely real.”

Imagine you were sitting alongside a pond and saw ripples made by frogs sitting in the center, but you couldn’t see the frogs. You could use this algorithm, and data about the ripples from a bunch of people sitting around the pond, to turn those ripples into a picture of the frogs. It might sound insane, but this is what Bouman’s algorithm can do to a black hole when combined with the Event Horizon Telescope project’s data.

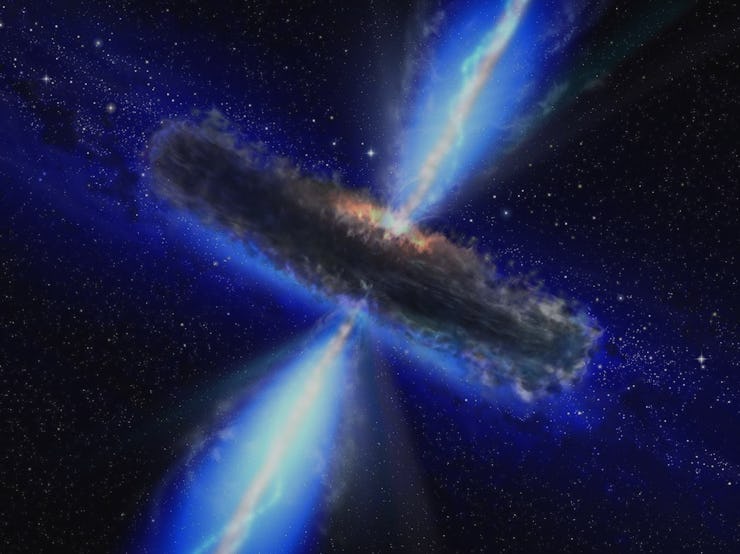

The closest supermassive black hole, Sagittarius A*, at the center of the Milky Way, is 4 million times the mass of the sun and would fit inside the orbit of Mercury. However, because it is 26,000 light-years away, taking a picture of it is like trying to see a grapefruit on the Moon. And since light can’t escape a black hole, the researchers are looking for the bright ring of super-hot, super-dense, glowing photons as they’re squeezed onto the event horizon of the black hole.
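The grapefruit comparison can be checked with a quick calculation. The sketch below uses the mass and distance quoted above, plus three assumptions that are not in the article: a 10-centimeter grapefruit, an average Earth-Moon distance of 384,400 kilometers, and the textbook shadow diameter for a non-spinning black hole, 2√27 · GM/c².

```python
import math

G = 6.674e-11                # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8                  # speed of light, m/s
SOLAR_MASS_KG = 1.989e30
LIGHT_YEAR_M = 9.461e15
RAD_TO_MICROARCSEC = math.degrees(1) * 3600 * 1e6

# Figures quoted in the article.
mass_kg = 4e6 * SOLAR_MASS_KG
distance_m = 26_000 * LIGHT_YEAR_M

# Assumption: shadow diameter of a non-spinning black hole, 2 * sqrt(27) * GM / c^2.
gravitational_radius_m = G * mass_kg / C**2
shadow_diameter_m = 2 * math.sqrt(27) * gravitational_radius_m
shadow_microarcsec = shadow_diameter_m / distance_m * RAD_TO_MICROARCSEC

# Assumptions: a 10 cm grapefruit at the average Earth-Moon distance.
GRAPEFRUIT_M = 0.10
MOON_DISTANCE_M = 3.844e8
grapefruit_microarcsec = GRAPEFRUIT_M / MOON_DISTANCE_M * RAD_TO_MICROARCSEC

print(f"Black hole shadow:     {shadow_microarcsec:.0f} microarcseconds")
print(f"Grapefruit on the Moon: {grapefruit_microarcsec:.0f} microarcseconds")
```

With these inputs, both angles come out at roughly 50 microarcseconds, so the analogy holds up reasonably well.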

It’s like a glow-ring around a grapefruit-sized shadow. And it’s one of the final frontiers of Einstein’s theory of general relativity. Every other test of Einstein’s theories about gravity has been carried out where gravity is relatively weak, says Shep Doeleman, the director of the Event Horizon Telescope project. A black hole is the extreme, he says, and “it’s where things get extreme that things tend to break down.”

Although LIGO has heard two black holes colliding, we still don’t know whether Einstein was right about gravity, he says. Because Bouman’s algorithm is so sophisticated, it can take the imperfect data available to the Event Horizon Telescope project and fill in the gaps with high accuracy. Doeleman says “the ultimate goal of the Event Horizon Telescope project is to do what we’ve been taught is completely impossible: to see something that by definition can’t be seen.”