Privacy

Apple's impending photo hashing update sounds like a privacy horror story

“All it would take to widen the narrow backdoor that Apple is building is an expansion of the machine learning parameters to look for additional types of content, or a tweak of the configuration flags to scan, not just children’s, but anyone’s accounts.”

Electronic Frontier Foundation

Earlier this week, word got out that Apple would soon introduce NeuralHash, a new on-device photo hashing program billed as a means to better identify and report child sexual abuse material (CSAM) to law enforcement. The company confirmed those rumors not long afterward, announcing that NeuralHash would be part of the security updates in its upcoming iOS 15 and macOS Monterey releases, due sometime in the next couple of months.

In a nutshell, NeuralHash will employ complex machine learning software to compare any image uploaded to iCloud Photos or iMessage against the identifying traits of known illicit material. If an image is flagged, it passes through multiple rounds of review, including by human employees, before law enforcement agencies are potentially alerted. The details of the new security features can be found in a 36-page technical summary published by Apple earlier this week.
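To make the matching idea concrete, here is a minimal sketch of how comparing uploads against a list of known hashes can work in principle. It is not Apple's implementation: NeuralHash relies on a neural network and cryptographic protections that are not reproduced here, and the simple "average hash" below is swapped in purely for illustration. The names `average_hash`, `hamming_distance`, and `flag_for_review`, the tiny 2x2 "images," and the `max_distance` parameter are all hypothetical.

```python
from typing import Iterable, List

# Toy stand-in for an image: a grid of grayscale values from 0 to 255.
Pixels = List[List[int]]


def average_hash(pixels: Pixels) -> int:
    """Toy perceptual hash: one bit per pixel, set when the pixel is
    brighter than the image's mean. This is NOT NeuralHash."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def flag_for_review(
    uploads: List[Pixels],
    known_hashes: Iterable[int],
    max_distance: int = 0,  # hypothetical tolerance for near-matches
) -> List[int]:
    """Return the indices of uploads whose hash matches a known hash.
    In this sketch, flagged items would go on to further review."""
    known = list(known_hashes)
    flagged = []
    for index, pixels in enumerate(uploads):
        image_hash = average_hash(pixels)
        if any(hamming_distance(image_hash, k) <= max_distance for k in known):
            flagged.append(index)
    return flagged


# Hypothetical data: one "known" image, a brightened copy, and an unrelated image.
known_image = [[10, 200], [220, 30]]
brighter_copy = [[20, 210], [230, 40]]
unrelated = [[200, 10], [30, 220]]

known_hashes = {average_hash(known_image)}
flagged = flag_for_review([brighter_copy, unrelated], known_hashes)
```

In this toy run, the brightened copy hashes to the same value as the known image and is flagged, while the unrelated image is not. That tolerance for small alterations is the point of a perceptual hash, and it is also why critics focus on who controls the list of known hashes: the matching code does not know or care what the list contains.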

Although unveiled as a means to combat universally reviled subject matter, the program's potential long-term implications have many privacy advocates and organizations extremely worried. As the Electronic Frontier Foundation explained in a blog post yesterday, “All it would take to widen the narrow backdoor that Apple is building is an expansion of the machine learning parameters to look for additional types of content, or a tweak of the configuration flags to scan, not just children’s, but anyone’s accounts.”

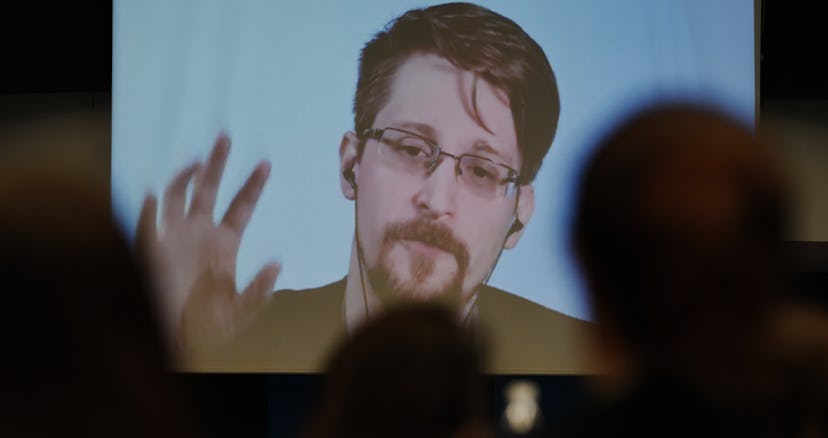

Meanwhile, Edward Snowden had some thoughts of his own about the ballooning issue: “Apple says to ‘protect children,’ they're updating every iPhone to continuously compare your photos and cloud storage against a secret blacklist. If it finds a hit, they call the cops. iOS will also tell your parents if you view a nude in iMessage,” he tweeted, referring to another aspect of Apple’s new security updates regarding new parental control notifications. He also got in a pretty good meme jab, if we’re being honest.

One in a trillion: Apple, for its part, claims there is only a one-in-one-trillion chance of its NeuralHash system producing a false positive, and that there is an appeals process already in place for users who wish to dispute any results. Still, as Sarah Jamie Lewis, executive director of the Canadian research non-profit Open Privacy, explains, the real worry is what the long-term effects and normalization will mean for everyday citizens. “You can wrap that surveillance in any number of layers of cryptography to try and make it palatable, the end result is the same. Everyone on that platform is treated as a potential criminal, subject to continual algorithmic surveillance without warrant or cause,” they tweeted yesterday.

It seems unlikely that Apple will change its mind on NeuralHash’s rollout in the coming months. That, coupled with how difficult it will be to close this Pandora’s box of privacy issues, means we are truly entering uncharted waters for digital privacy.