
No, Pete Buttigieg probably isn't getting special treatment in your Gmail inbox

A new story invokes fear, uncertainty, and doubt about how Google manipulates political emails, but it falls short of proving its point.

A report by The Markup, co-published with The Guardian, alleges that Gmail’s auto-sorting feature is pushing large numbers of presidential campaign emails to secondary inboxes and spam folders, but the truth is a lot more complicated than it would appear. For its investigation, the outlet set up a fresh Gmail account and signed it up for presidential candidates’ mailing lists and updates from think tanks, advocacy groups, and nonprofits. The investigation ran from mid-October to mid-February as the campaign emails piled up in the account. From this data, the site drew conclusions about how Gmail’s algorithms work, and how they possibly work against certain candidates and advocacy groups.

In The Markup’s testing, the vast majority of campaign emails ended up in either Gmail’s “Promotions” tab or the spam folder. The investigation is well intentioned in its concern about Google’s ability to dictate political communications, but its data is fundamentally flawed because of the way the account was set up, the way Gmail perceives accounts, and the way the report treats Gmail’s algorithms as independent of human activity and, for that matter, of other algorithms.

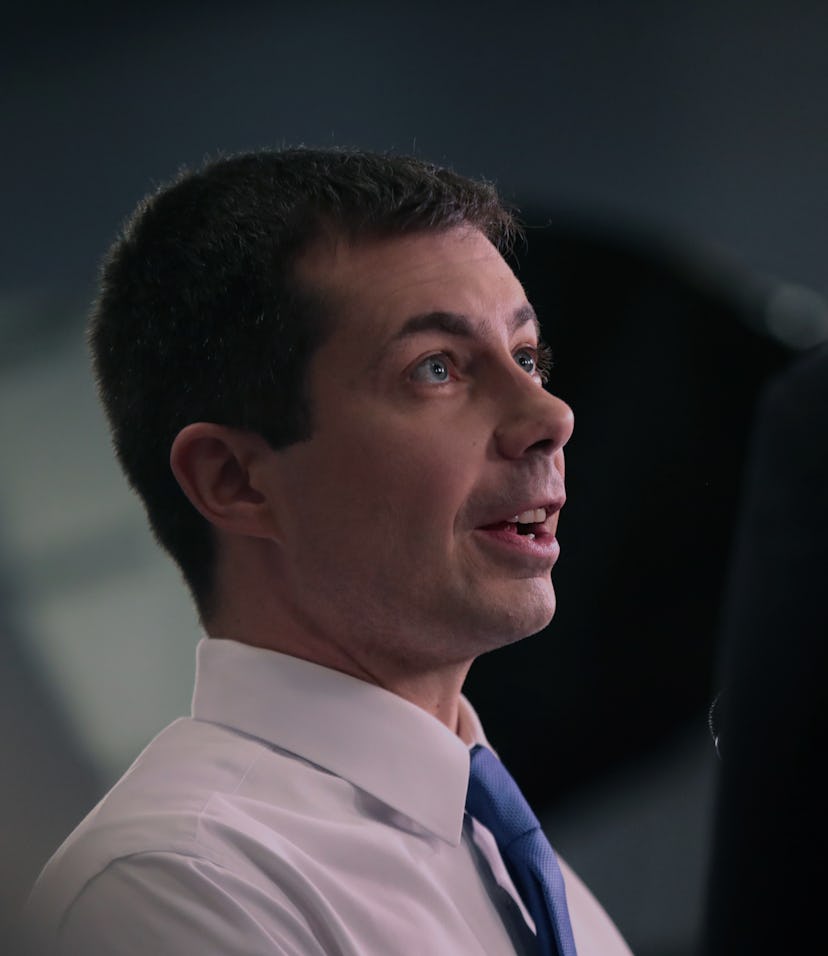

The results are staggering, but don't tell the whole story — The investigation signed up for emails from 16 campaigns using its test account. Of these, only one campaign’s emails — those from Pete Buttigieg — ended up with the majority of messages (63 percent of them) in the “Primary” inbox.

Fewer than half of every other campaign’s emails were sorted into the Primary inbox. None of Elizabeth Warren’s or Joe Biden’s campaign emails were placed in the Primary inbox; most were marked as promotional.

Of the 5,134 emails the test account received from 171 groups and politicians, nearly 90 percent were sorted into either Promotions or spam. On their face, these numbers are worrying, suggesting that Google’s “black box” algorithms are favoring some candidates while shuttling other emails to inboxes that users never interact with or may not even be aware of. But because of the methodology of this testing, it’s unclear what the results really mean for average users.

So many other factors at play — The Markup did not touch the inbox set up for testing, instead allowing Gmail to auto-sort on what it assumed was a blank-slate algorithm. But even default algorithms take into account a wide variety of factors (an uncountable number, really) that this test doesn’t speak to at all. There’s no such thing as a blank slate. For example: The account has to be associated with an IP address, even if it is hidden using Tor (a privacy-focused network that anonymizes users’ IP addresses). Presumably this is an address from which Google can derive other data used in its algorithmic decision-making, but The Markup notes that it used the location of Tampa, Florida, when email senders requested a location. That is a signal that changes the algorithm. How? We don’t know.

Furthermore, the account was associated with a “new” phone number created by The Markup, but there is no way of knowing if that number had been recycled from a previous owner, and the publication doesn’t specify what area code that number hails from, which is another complicating factor. The account only signed up for political emails and wasn’t used otherwise, which could be an indicator to Google that this is a sock puppet, spam, or abandoned account. Was this account created on a fresh computer? Using what OS? Using what build of Tor? Using what plug-ins? In incognito mode? What did other users do with each email? All of these are factors that would affect the algorithm and therefore undermine the very premise of the experiment.

The Markup even states this in its behind-the-scenes post on the story:

Our findings are based on a four-month study of one model email address and a list of political candidates, advocacy groups and think tanks that was robust but by no means exhaustive [emphasis ours].

Our data show how Gmail handled one particular inbox with no user input; it’s how the default algorithm worked in this case without individual user participation [emphasis ours].

Gmail says its algorithms respond to each user’s interactions to adjust categorization in the aggregate and for individual users. If so, email categorization will vary from person to person. It’s unclear to what extent this behavior would affect the categorization of political mail for any given person, but it’s important to note that our findings would not necessarily match where emails end up for a particular Gmail user [emphasis ours].

We did not determine why Gmail categorized certain emails or certain senders’ emails the way that it did.

The average Gmail account is all about “user participation.” The Markup’s testing does not account at all for human activity. Algorithms respond to users’ actions, adapting and evolving as emails are moved around and manually re-categorized. The test account was left untouched, which is simply unrealistic. Why were no other test accounts set up? Why were actual users’ accounts not polled? What voter is leaving all their emails unread and unmoved? Leaving the account untouched gave Gmail’s algorithm no chance to learn which emails should be re-sorted in the future.

“Mail classifications automatically adjust to match users’ preferences and actions,” a Google spokesperson told The Markup. But what if there’s no user involved? Or worse, what if Google thinks something other than a user is controlling the account? The report never answers that question. It doesn’t even seem interested in it.

Algorithms also interact with other algorithms, including those of the campaigns themselves. These presidential candidates are doing everything they can to end up in front of a set of human eyes attached to a wallet, and that means gaming the system with tricks and specific targeting based on the details of the account signing up for messages and how that account has responded to previous messages. Even the bulk mail service that a candidate uses for marketing has an impact on how Google perceives those emails. The Markup doesn’t explain how those factors may have affected its study. For example, the authors of the report say they received nothing from Donald Trump, even though it seems unlikely that a president seeking reelection isn’t sending out campaign emails. The report offers no explanation for why no emails reached this account, and there is no indication that the authors looked into it.

What would this even tell us? — This “experiment” used one account — one! — rather than doing a large (or even small) study of multiple accounts controlling for these factors. You would likely need to repeat this experiment thousands of times to even draw conclusions about trends in political email marketing via Google’s service. Even then, since these algorithms (on both ends!) are constantly changing and updating, the data would be a mishmashed snapshot of different versions of the algorithm over the course of months.

Importantly, this is also not the way algorithms are actually used. Algorithms are optimized to take in as much data on the user as they can and then customize that user’s experience to match the user’s needs and desires. There is no such thing as an objective algorithm, just as there is no such thing as an objective direction for a car to go in, and drawing conclusions about what would happen to a hypothetical car with nobody behind the wheel is useless even if the resulting pile-up looks very dramatic.

It should also be noted that The Markup reported its results and methodology in separate posts, which could lead many readers to draw conclusions about Google’s handling of email without even seeing how the testing was carried out. That testing, by the outlet’s own admission, relied on a small data set that is “by no means exhaustive.”

There is no question that reporting on the unseen decisions at play inside the algorithms of big tech has never been more important than it is right now, but presenting a lopsided data set and allowing an audience to draw huge conclusions from that data is nothing short of dangerous. Does Google have too much power over the information we see? Certainly. Should the company be scrutinized and analyzed by independent journalists and researchers? Absolutely. Should the government be more involved in the regulation of what Google can do with consumers’ data? Surely.

But throwing around concepts like bias and shadow bans in relation to big tech and politics has never been more dangerous. If you’re going to issue a report that suggests a major technology company has been favoring, suppressing, or outright removing communications from major political figures, the science has to be airtight. This report, unfortunately, simply opens the door to unscientific speculation, rumor-mongering, and broad conclusions drawn from little evidence.