
IBM flexes on Google with new quantum computing technique

The PR pissing contest is heating up.

IBM Research says it has developed a technique for manipulating atoms to create a quantum computing simulator, days after Google claimed it was the first company to achieve “quantum supremacy.”

There’s a pissing contest afoot in quantum computing, despite the fact that most people have no idea why any of this should even matter.

Finding the middle ground — IBM researchers created an analog simulation of qubit behavior with titanium atoms by exploiting their magnetic properties to push the atoms up and down very quickly.

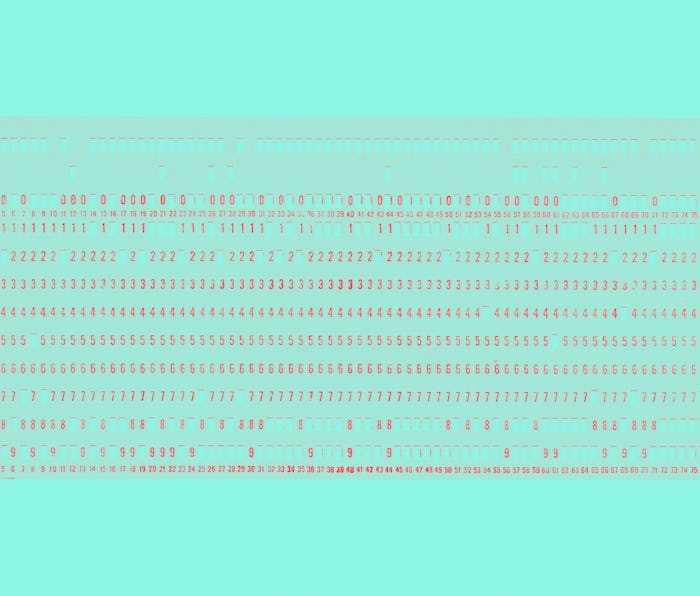

“Like a magnet on a refrigerator, each titanium atom has a north and south magnetic pole. The two magnetic orientations define the ‘0’ or ‘1’ of a qubit,” IBM said in its blog post. The researchers used microwave bursts from the tip of a needle-like instrument to control the atoms, rapidly changing their position and spinning them up and down.

Think about the blurriness of a flipped coin in mid-air — it’s kind of both heads and tails at the same time.
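For readers who want to poke at the coin analogy, here is a minimal sketch in Python. It is a toy model, not IBM's method: it assumes a single qubit with equal, real-valued amplitudes for 0 and 1, and the function names `measure` and `sample_counts` are made up for illustration. Each measurement forces the "coin" to land, with the odds set by the squared amplitudes.

```python
import math
import random


def measure(amp0: float, amp1: float, rng: random.Random) -> int:
    """Collapse a toy qubit to 0 or 1.

    Assumption: amplitudes are real and normalized (amp0**2 + amp1**2 == 1),
    so the probability of reading 0 is simply amp0 squared.
    """
    p0 = amp0 ** 2
    return 0 if rng.random() < p0 else 1


def sample_counts(shots: int, seed: int = 0) -> dict:
    """Measure a freshly prepared equal superposition `shots` times."""
    rng = random.Random(seed)
    amp = 1 / math.sqrt(2)  # equal weight on 0 and 1, like the mid-air coin
    counts = {0: 0, 1: 0}
    for _ in range(shots):
        counts[measure(amp, amp, rng)] += 1
    return counts


if __name__ == "__main__":
    # Roughly half the measurements should come up 0 and half 1.
    print(sample_counts(10_000))
```

Any single measurement gives a plain 0 or 1. The "both at once" part only shows up in the statistics over many runs.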

None of this has real-world applications right now, though.

Why is it significant? — Quantum computing is supposed to make certain kinds of calculations much faster than ever before. Computers today understand information in the form of ones and zeros, each a “bit.” They have a limited capacity to process bits at any one time, though.

Quantum bits reduce the number of bits needed to run some calculations because each qubit can be a blend of one and zero at the same time. Take guessing a password, for instance. A group of qubits can represent many possible passwords at once, so a quantum algorithm could find the right one with far fewer attempts than testing every variation in turn.
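To put rough numbers on the password example, the sketch below compares a classical brute-force search with Grover's search, the standard textbook quantum algorithm for this kind of guessing problem. The article does not name an algorithm, so Grover's is an assumption here, and the function names are hypothetical. The code only does the arithmetic on attempt counts; it does not simulate a quantum computer.

```python
import math


def classical_worst_case(n_bits: int) -> int:
    """Attempts needed to try every possible n-bit password one by one."""
    return 2 ** n_bits


def grover_iterations(n_bits: int) -> int:
    """Approximate Grover iterations for one marked item among 2**n_bits.

    Uses the textbook estimate floor(pi/4 * sqrt(N)); this is an
    idealized count that ignores noise and error correction.
    """
    return math.floor(math.pi / 4 * math.sqrt(2 ** n_bits))


if __name__ == "__main__":
    for n_bits in (10, 20, 30):
        print(n_bits, classical_worst_case(n_bits), grover_iterations(n_bits))
```

For a 20-bit password, that works out to 1,048,576 classical attempts in the worst case versus about 804 Grover iterations. The speedup is quadratic, not unlimited, and it assumes qubits that stay stable long enough to finish the job.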

Qubits, however, are hard to keep in this middle state between one and zero.

A lot of buzz — Gaining such control over atoms is another advance in quantum computing research, but experts themselves aren’t totally clear on what the technology will ultimately be used for. At the very least, it’s unlikely to find its way into general-purpose computers. That extra power would be wasted on most tasks other than compute-heavy research.

Quantum computing is at a phase not unlike artificial intelligence. It has potential but right now it’s very nascent and more excitement than anything. Much AI is nothing more than humans feeding machines pictures of dogs until they remember what a dog looks like.

John Preskill, the physicist who coined the term “quantum supremacy,” recently told the Los Angeles Times, “people are kind of gearing up, figuring the technology will mature, and they want to be ready. Exactly when we will see real economic impact from quantum computing, nobody really knows.”

That includes IBM and Google.