ByteDance is apparently pretending there was no deepfake tool slated for TikTok

The code is hidden in TikTok and its Chinese twin Douyin.

Israeli in-app market research company Watchful.ai spotted something new in TikTok and Douyin. A TechCrunch report uses the company’s findings to expose a deepfake feature in both the English and Chinese versions of TikTok. The feature resembles Snapchat’s FaceSwap, but adds biometric facial scanning closer to Apple’s Face ID. TikTok spokespeople told TechCrunch and Gizmodo that there’s no such feature, and a Douyin spokesperson denied that the tool would be a part of TikTok.

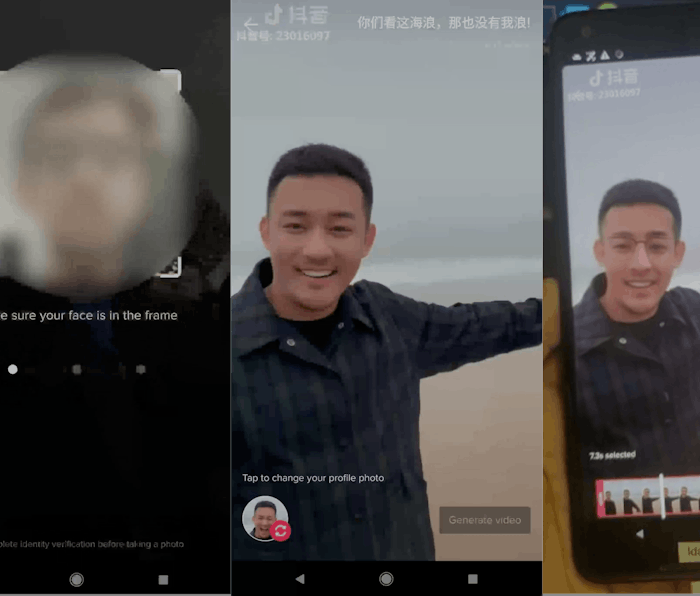

ByteDance’s Face Swap — Watchful discovered this hidden code in the latest Android version of both apps, but the feature isn’t available to the public. The Face Swap tool first asks to scan your face, which doubles as a way for the app to confirm you’re only using your face and not using someone else’s likeness. The scanning process requires nodding, turning, blinking, and opening and closing your mouth.

Once the scan is complete, you can place your face on someone else’s in a ByteDance video approved for use. If you want to download and share the video, a watermark will note that it’s a deepfake. Watchful was able to manipulate the code to test it out, and the resulting video looked “quite seamless,” according to TechCrunch reporter Josh Constine.

Read the fine print — Watchful also found updated terms of service for both apps related specifically to this new feature, including a notice that it would not be accessible to minors. Douyin denied the veracity of any update to TikTok’s terms, but didn’t deny its own association with the face-swapping mechanism. At first, TikTok flatly denied all evidence of the tool, but eventually told TechCrunch, “The inactive code fragments are being removed to eliminate any confusion.”

Will they or won’t they? — With all the heat from U.S. lawmakers over TikTok’s Chinese connections and loose children’s privacy protections, ByteDance might not want to poke the bear. In October 2019, TikTok was one of 11 social media companies to receive a bipartisan letter pressuring them to curb the rise of deepfakes.

This new report shows either an attempt to cover up a sensitive feature test or that TikTok was completely unaware of such a test. Either way, it doesn’t bode well for public trust in the app.