
A Microsoft employee penned Washington state's sketchy facial recognition law

Microsoft calls the legislative measure "progress," but conveniently downplays who wrote SB 6280.

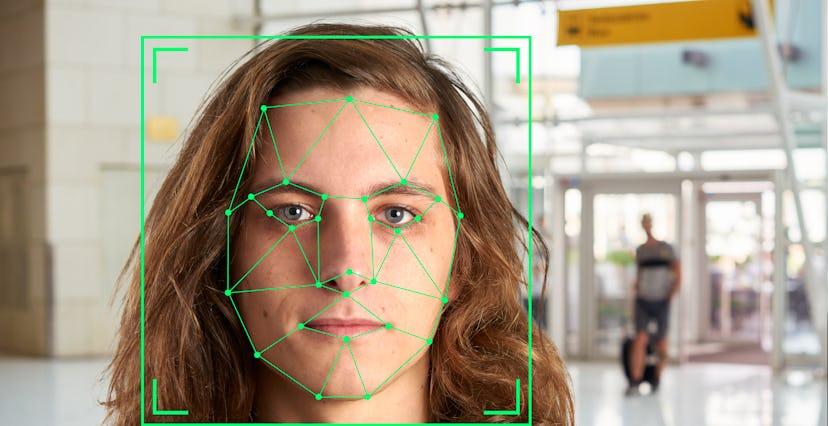

SB 6280, a bill in the Washington State Legislature, concerns the use of facial recognition software. As OneZero reports, the bill has been signed into law and takes effect in July 2021. On the surface, it looks like an effective measure to curtail potential abuse of facial recognition technology. A closer look, however, reveals some concerning details. They deserve close attention, especially now, when the pressures of a pandemic have us all a little distracted and there's a real risk that legislation that shouldn't pass will slip by unnoticed.

Written by a Microsoft employee — Microsoft's president Brad Smith wrote a positive review of the legislative move, suggesting it's set to be a harbinger of enhanced digital privacy in the state of Washington. At one point, Smith says, "When the new law comes into effect next year, Washingtonians will benefit from safeguards that ensure upfront testing, transparency and accountability for facial recognition, as well as specific measures to uphold fundamental civil liberties."

What Smith mentions only much later on in his blog post is that one of the writers of SB 6280, Washington State Senator Joe Nguyen, is a Microsoft employee.

Essential services could be denied — In one of its sections, the bill states that agencies using facial recognition software for decision-making are expected to conduct "meaningful human review" when it comes to essential services. These include "housing, insurance, education enrolment, criminal justice, employment opportunities, health care services, or access to basic necessities such as food and water, or that impact civil rights of individuals."

At first blush that sounds great, but "meaningful human review" is loosely defined. So loosely, in fact, that Jennifer Lee of the American Civil Liberties Union (ACLU) warns the requirement can easily be circumvented, or that the biases of the human doing the reviewing could come into play, leaving people still denied access to essential services.

"Alternative regulations supported by big tech companies and opposed by impacted communities do not provide adequate protections," Lee says. "[I]n fact, they threaten to legitimize the infrastructural expansion of powerful face surveillance technology."

Nguyen's bill regulates three types of use: persistent tracking, ongoing surveillance, and real-time identification. Beyond that, under the current rules, agencies have carte blanche.

Flimsy rules don't cut it — Lee and the ACLU are calling for a moratorium on facial recognition rather than the "weak" regulations currently proposed. As it stands, the bill lacks comprehensive accountability frameworks and the "accountability reports" laid out in the bill will, most likely, fail to provide guardrails against exploitation.

"There is no enforcement mechanism," Lee concludes. "If agencies choose not to follow the provisions in the bill, nothing will happen to them."