These feminist chatbots were designed to combat online abuse

The chatbots are the result of a Feminist Internet course.

Feminist Internet, a U.K.-based nonprofit organization, recently ran a five-day course created with the Creative Computing Institute. The course used "feminist design principles" to create chatbots that tackle the issue of online abuse, especially as it pertains to women and other marginalized communities. Feminist Internet is known for its feminist chatbot F'xa, which deals with AI bias, as well as for its work on creating a feminist Alexa.

Bring out the bots — After researching what they specifically wanted to address, storyboarding, and coming up with chatbot personalities, participants of various skill levels coded five chatbots:

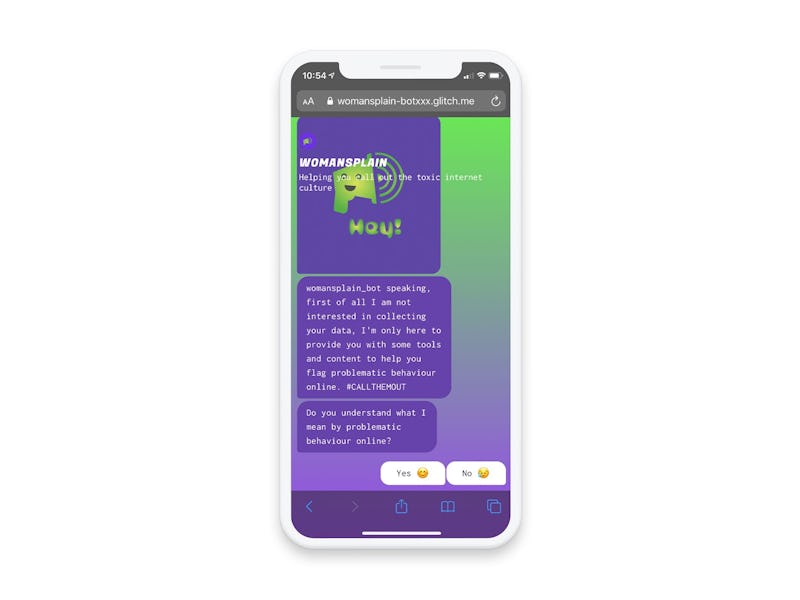

- Womansplain by Calie Calatayud, Kyoungmin Kim, and Georgia Hughes.

- Pocket of Joy by Eunah Lee, Hannah Seddon, and Tilly Cullen.

- Cyber Smart Buddy by Judy Chyou and Janet Choi.

- Ayla by Elizabeth Connor and Ipek Demircioglu.

- Ms. Leaky Pipes by Ana Blumenkron and Ellie Stanton.

The bots provide resources and advice on topics ranging from teenage bullying to sending nudes safely to dealing with harassment online.

Who was involved? — Seyi Akiwowo, founder and executive director of Glitch, provided an overview of online abuse that differentiated between the kinds of abuse often perpetrated online. Ph.D. student Carolina Are, who specializes in how misinformation and conspiracy theories affect criminal cases, discussed the ways legal systems have failed to evolve to combat online abuse.

Caroline Sinders, a machine learning designer and user researcher, shared her "Diagram of Trolling," which "plots content on scales from 'casual to serious' and 'harmful to absurdist.'" Azmina Dhrodia, head of operations and founding advisor for the Block Party app, outlined how the app gives social media users tools to protect their online communications and avoid harassment.

You can check out what they all had to say in this YouTube playlist; each video is roughly 45 to 60 minutes long.

This is a man’s world — When AI is given a personality, it’s often feminized and filtered through subservient or sassy tropes. Smart assistants like Alexa and Siri are the most popular examples of this. Researchers have been particularly interested in how conversational AI responds to harassment. “These bots do have the capability, if programmed effectively, to reject abuse and promote healthy sexual behavior,” wrote Quartz reporter Leah Fessler in 2017.

The bots built during the course manage to carve out safe spaces using extremely narrow AI. As this technology grows more complex and we hurtle toward superhuman AI, more of these systems should become, well, a bit more human.

Videos from the course are available on YouTube, and organizations can request the course by contacting charlotte@feministinternet.com.