
A bookshelf in your job screening video makes you more hirable to AI

From your background to your outfit, an algorithm’s judgment could make or break your chances of getting hired.

As more employers turn to AI to streamline hiring, more questions are arising about the ethics involved. Journalists at Bavarian Broadcasting (BR) in Munich, Germany, ran an experiment with the video-analysis AI of a local startup, Retorio, to see which factors might affect its perception of job applicants. They found that something as simple as wearing glasses can change the algorithm’s personality assessment, potentially costing someone a job.

Add it to the list of biased algorithms — The startup analyzes video to determine personality attributes based on the Big Five Model (aka, "Ocean"), which breaks scores down into "openness, conscientiousness, extraversion, agreeableness, and neuroticism."

The team’s first test used a white actress to see how her assessment changed along with her appearance. Wearing glasses made her seem less conscientious, while wearing a headscarf made her seem much less neurotic. She went through several changes of outfit and hairstyle, each producing very different results.

Retorio responded to these findings, stating, “Just like in a normal job interview, these factors are taken into account for the assessment.” But part of the reason AI is used in these processes is to remove bias from the initial screening, so if it’s taking appearance into account, it’s already failing.

In a test that darkened the video of a Black woman, the algorithm rated her as less agreeable and more neurotic in the filtered video. There are hints of racial bias here, but more broadly, this could penalize anyone who isn’t able to record high-quality video.

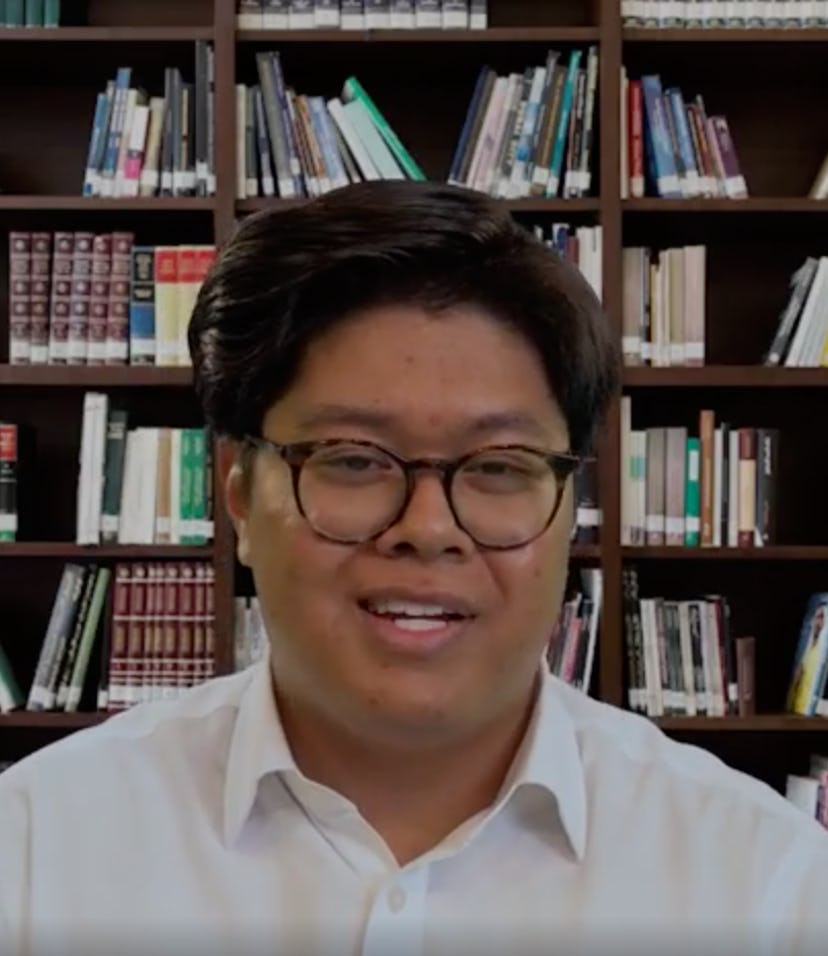

Another finding had nothing to do with the person at all. Adding art or a bookshelf to the background made an Asian test subject seem much more conscientious and significantly less neurotic than the same faux applicant in front of a plain background. Changes to the audio in these videos, which allegedly informs the assessment too, had little effect on the outcomes.

Let’s stop replacing human judgment with AI — It’s no secret that AI has its failings and biases that extend far beyond this startup. HireVue, a similar tool, has been in use in the U.S. for years and is still going strong. In this case, only about 20 percent of applicants receive no human input, but that’s still too many.

Whenever you train AI on a flawed system, that AI will produce flawed results. Most often, that hurts marginalized groups, people who have a hard enough time getting a foot in the door as it is. Even Amazon shelved its AI hiring process because it discriminated against women. Maybe instead of letting an algorithm decide whether or not someone’s agreeable, hiring managers could work to unlearn their biases.