
Google Lens can now combine images and text for better searches

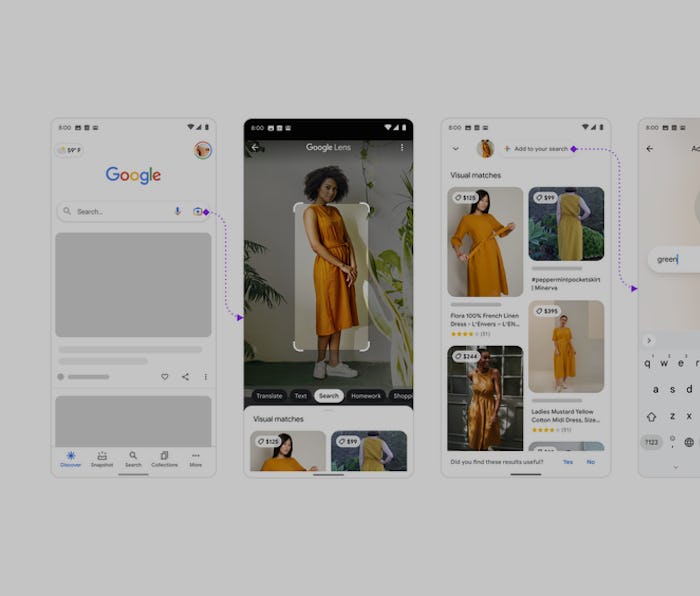

In beta now in the U.S., “multisearch” lets Google use text as added context for an image search.

Google is introducing a new way to handle complicated searches using two sources of information: image and text.

“Multisearch” lets you add text as context for an image you’re searching with Google Lens, so you can ask for additional information about something you can see but don’t know anything about. Google’s examples include looking up a tutorial for nail art that might be hard to describe, or finding a dress you like in a different color.

Difficult searches — Multisearch combines two types of searches Google already excels at to try and get at information that’s harder to put into words.

Adding context is as simple as snapping a picture in Lens (or uploading your own screenshot) and then tapping the “+” button to add text like “tutorial” or “green” to narrow down your search. Google’s examples seem to be mostly oriented around shopping for now, but there’s the potential for far more as the company gets better at learning the searcher’s intent.

MUM — Multisearch is just one implementation of Google’s Multitask Unified Model (MUM) technology, which the company introduced at Google I/O 2021. MUM, according to Google, “understands” information better across different formats (like images, text, or video) and different languages. Broadly, you’ll be able to ask more specific, context-based questions and get more nuanced answers in fewer searches.

On one hand, multisearch, and whatever other ways Google ultimately applies MUM, means the company will be even better at answering every question you might have. On the other hand, I think there’s a certain amount of pleasure in knowing there are just some things you can’t search for. I like that there are pieces of information that I need to speak to an expert to know. I guess this means I’m old.

Google’s multisearch is still a work in progress. You can access a beta version of the feature in the U.S. on iOS and Android.